Why I Built Gluon: From tmux Chaos to AI Agent Orchestration

Part 1 of 4 in the Gluon: Building an AI Agent Orchestrator series

Five Panes, Zero Visibility

Alt+Right. Alt+Right. Alt+Right. Alt+Right.

Five tmux panes. Five Claude Code sessions running simultaneously — homepage redesign, auth bug, data pipeline refactor, API docs, module tests. I'm cycling through them like a pit crew chief checking five cars at once, except I can't actually see any of the dashboards.

One pane is hanging. Did it crash, or is it thinking? Another threw an error three minutes ago while I was reading output in a third. The costs are climbing — $0.47 here, $0.82 there — and I genuinely have no idea what today's total is.

Which session is working on what? Did that agent finish or get stuck? Is it still running?

I couldn't answer any of these without hunting through scrollback. No single view of the work. No way to see "three tasks done, one running, one blocked." Just context-switching hell dressed up as productivity.

Nothing was wrong with the agents, or with how I was driving them. I just had no way to see them — five black boxes, costs climbing, no dashboard anywhere. That was the first crack: the agents had outrun the tooling around them.

The Management Problem Nobody Was Solving

I've spent years managing human engineering teams. The patterns are old and proven: assign work, track progress, unblock blockers, measure output. When things get chaotic, you pull up a project board — Kanban, Jira, whatever — and suddenly the mess has shape. Visibility fixes half the problems. Coordination fixes the rest.

Staring at those five tmux panes, the thought arrived fully formed: these agents need what human teams need.

What they needed wasn't a better terminal multiplexer. It was management: visibility, status tracking, cost awareness, and coordination across parallel work. The infrastructure we'd built for human teams over decades simply didn't exist for AI agents yet.

The market was signaling urgency. 57% of organizations are already running agents in production. Gartner tracked a 1,445% surge in multi-agent system inquiries. Rajeev Rajan, CTO at Atlassian, said something that stuck with me: "Some teams at Atlassian have engineers basically writing zero lines of code: it's all agents, or orchestration of agents."

Agents were everywhere. The tools to manage them were nowhere.

I'd been watching Vibe Kanban, which showed what was possible: a Kanban board that treats AI agents like sprint team members and runs them in parallel in isolated git worktrees. Beautiful and simple. But I wanted to go deeper. What if orchestration wasn't just about visibility, but also about cost tracking, session persistence, autonomous loops, and safety mechanisms? What if we built something that solved the production problem?

Nobody had built that. So I did.

AI Building Its Own Manager

Here's the part that still makes me pause. I used Claude Code to build Gluon — the orchestrator that manages Claude Code agents. AI building systems to orchestrate AI. The recursion isn't lost on me.

A long weekend of intense iteration. Python 3.12, FastAPI backend, React dashboard. Five layers: interface (CLI, web, bots), bot core, orchestration, services, and an agent layer wrapping Claude's SDK. Not overengineered. Structured.

Two or three days. Done.

Claude Code's capability to handle 200-file edits with 200K token context in 90 seconds made this possible. That speed compounds. Rapid feedback loops: try an idea, see it fail, refactor, ship — in cycles that used to take weeks.

But the speed wasn't the real enabler. The bootstrap worked because the context was already structured. By the time the first real feature was implemented, the AI already had knowledge of the product, architecture, tech choices, and quality requirements. It didn't start cold. I understood the problem deeply. Claude Code understood it too. The handoff was frictionless.

That frictionless handoff is exactly why Gluon exists. And it's the clearest signal I've seen about where software engineering is heading.

What Gluon Actually Is

Gluon isn't trying to replace Claude Code or rebuild agents. It wraps the Claude Agent SDK with the orchestration layer the SDK doesn't provide.

Think of it as a project manager for AI agents.

The architecture is straightforward:

Submit a task via CLI, web, or chat bot. The orchestrator creates a session, spins up a GluonAgent, and streams responses in real time. Cost is tracked at every step. The agent runs in its own git worktree: isolated, sandboxed, never touching main. When it finishes, the session persists, so you can resume it later with full context intact.

Here's what this solves — the exact problems from those five tmux panes:

Visibility: Every agent is visible on a Kanban board. Queue, Running, Review, Done. No more guessing.

Isolation: Each task gets its own git branch and worktree. No cross-contamination. No fights over resources.

Session persistence: Multi-day workflows work because the context survives. Stop an agent, come back tomorrow, resume with everything intact.
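The isolation point can be made concrete with git's own `worktree` command. What follows is a minimal sketch, not Gluon's actual code: the `gluon/<task_id>` branch naming, the sibling `-worktrees` directory, and both function names are assumptions made for illustration. Only `git worktree add -b` itself is standard git.

```python
import subprocess
from pathlib import Path


def worktree_command(repo: Path, task_id: str, base: str = "main") -> list[str]:
    """Build the git command that gives one task its own branch and worktree."""
    branch = f"gluon/{task_id}"  # hypothetical naming scheme
    path = repo.parent / f"{repo.name}-worktrees" / task_id  # hypothetical layout
    # `git worktree add -b <branch> <path> <base>` creates the branch from
    # <base> and checks it out into a separate directory.
    return ["git", "-C", str(repo), "worktree", "add", "-b", branch, str(path), base]


def create_worktree(repo: Path, task_id: str, base: str = "main") -> Path:
    """Run the command and return the directory the agent should work in."""
    cmd = worktree_command(repo, task_id, base)
    subprocess.run(cmd, check=True)  # raises CalledProcessError if git fails
    return Path(cmd[-2])
```

Because every agent gets a separate directory and branch, two agents can edit the same file without ever touching each other's checkout or main.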

And then there's the part that changes everything.

Ralph Loop: When Agents Drive Themselves

Gluon's real power emerges when you go autonomous. Ralph Loop is the self-driving mode — the agent keeps working toward completion without human intervention.

That should make you slightly nervous. Good. It made me nervous too.

Safety is built into the architecture. The circuit breaker uses a state machine: CLOSED (normal) -> HALF_OPEN (warning) -> OPEN (stopped). If an agent gets stuck — same error five times, no progress for five iterations — the circuit opens and the loop stops. You get a notification. A human reviews. Then you decide what's next.
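A rough sketch of that state machine is below. It illustrates the idea and is not Gluon's implementation: the class and method names are hypothetical, and the warning threshold of three is an assumption, since only the stop threshold of five is stated above. This sketch also lets a warning clear itself if the agent recovers, which is a design choice of the example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class CircuitState(Enum):
    CLOSED = "closed"        # normal operation
    HALF_OPEN = "half_open"  # warning: trouble detected, loop still running
    OPEN = "open"            # loop stopped, waiting for human review


@dataclass
class CircuitBreaker:
    open_threshold: int = 5  # same error five times, or five stalled iterations
    warn_threshold: int = 3  # assumption: the warning point is not specified
    state: CircuitState = CircuitState.CLOSED
    same_error_count: int = 0
    no_progress_count: int = 0
    last_error: Optional[str] = None

    def record(self, error: Optional[str], made_progress: bool) -> CircuitState:
        """Record one loop iteration and return the resulting state."""
        if self.state is CircuitState.OPEN:
            return self.state  # stays open until a human resets it

        # Count consecutive repeats of the same error message.
        if error is None:
            self.same_error_count = 0
        elif error == self.last_error:
            self.same_error_count += 1
        else:
            self.same_error_count = 1
        self.last_error = error

        # Count consecutive iterations without progress.
        self.no_progress_count = 0 if made_progress else self.no_progress_count + 1

        worst = max(self.same_error_count, self.no_progress_count)
        if worst >= self.open_threshold:
            self.state = CircuitState.OPEN
        elif worst >= self.warn_threshold:
            self.state = CircuitState.HALF_OPEN
        else:
            self.state = CircuitState.CLOSED
        return self.state
```

The loop would call `record()` once per iteration and stop as soon as it returns `CircuitState.OPEN`.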

The loop detects completion through multiple signals: the agent can emit a RALPH_STATUS block, the loop can look for completion keywords, or it can scan TODO files. When completion confidence reaches 60%, the loop exits to Review and a human takes a look. If the work is good, it goes to Done. If something's wrong, you ask clarifying questions and the agent resumes.
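One way to picture multi-signal detection is a weighted score checked against the 60% threshold. Everything in this sketch other than that threshold is assumed for illustration: the weights, the completion phrases, the `RALPH_STATUS: COMPLETE` line format, and the use of markdown checkboxes in TODO files are hypothetical stand-ins, not Gluon's real rules.

```python
import re

COMPLETION_THRESHOLD = 0.60  # exit to Review at 60% confidence

# Hypothetical weights and phrases, chosen only to make the example concrete.
WEIGHTS = {"status_block": 0.5, "keywords": 0.2, "todos": 0.3}
DONE_PHRASES = ("all tasks complete", "implementation complete")


def completion_confidence(agent_output: str, todo_text: str) -> float:
    """Combine three completion signals into a score between 0 and 1."""
    score = 0.0
    text = agent_output.lower()

    # Signal 1: an explicit status block (line format assumed).
    if re.search(r"ralph_status:\s*complete", text):
        score += WEIGHTS["status_block"]

    # Signal 2: completion phrases anywhere in the output.
    if any(phrase in text for phrase in DONE_PHRASES):
        score += WEIGHTS["keywords"]

    # Signal 3: fraction of checked markdown checkboxes in the TODO file.
    boxes = re.findall(r"^\s*[-*] \[( |x|X)\]", todo_text, flags=re.MULTILINE)
    if boxes:
        done = sum(1 for box in boxes if box.lower() == "x")
        score += WEIGHTS["todos"] * done / len(boxes)

    return score


def should_exit_to_review(agent_output: str, todo_text: str) -> bool:
    """True once the combined confidence reaches the threshold."""
    return completion_confidence(agent_output, todo_text) >= COMPLETION_THRESHOLD
```

With these made-up weights, no single signal clears the bar on its own; a status block plus a fully checked TODO file does.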

A 50-line feature? Ralph typically needs 10-20 iterations. Hands off. Just watching.

That's the shift: from doing the work to watching the work get done.

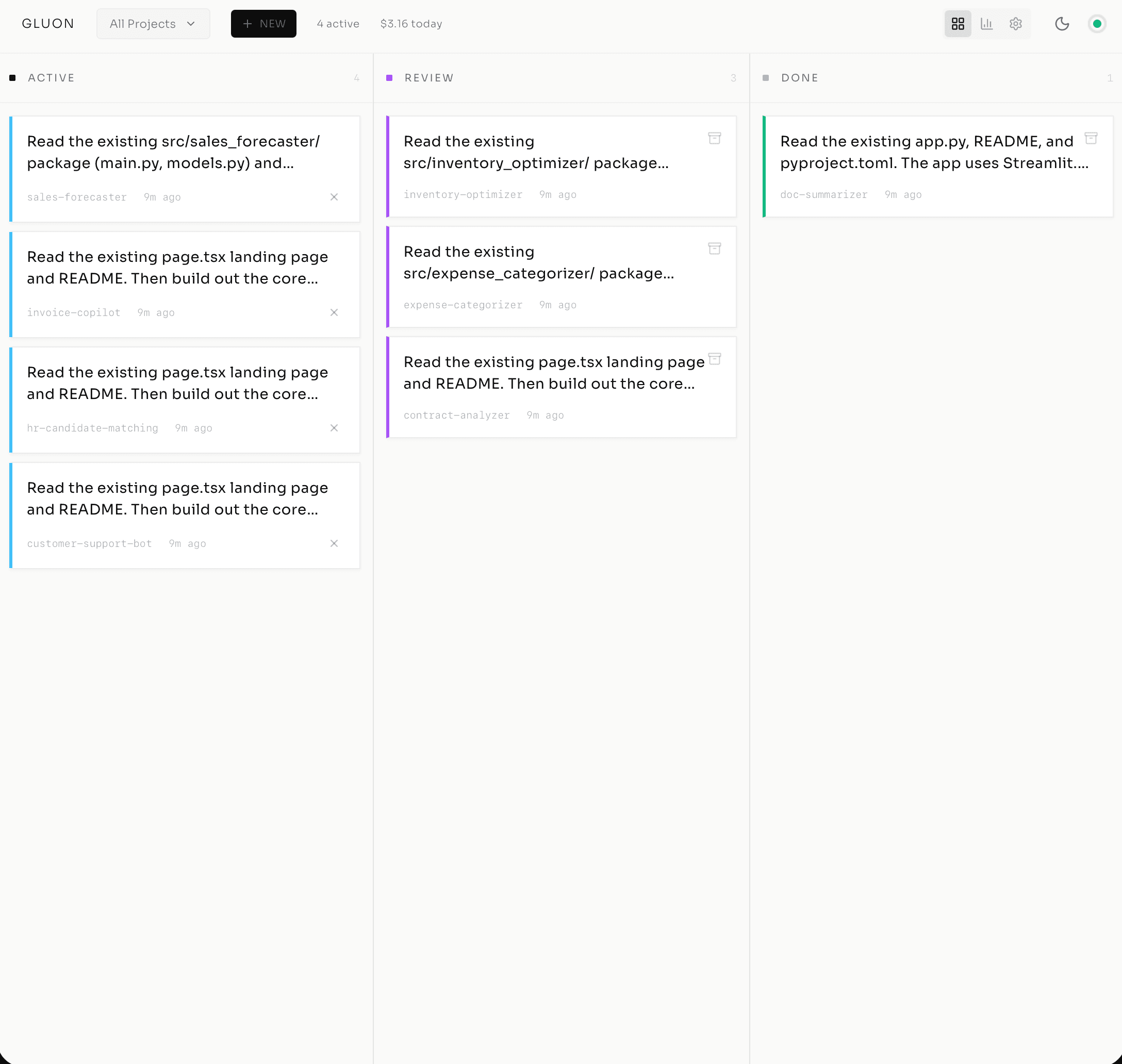

The Kanban Workspace

The web dashboard is the nerve center. Four columns: Queue, Running, Review, Done.

Everything visible. Status clear. Cost tracked. Drag and drop to manage the workflow. Running tasks have colored health dots: green (healthy), yellow (slow), orange (looping), red (stuck).

Remember those five tmux panes? This is their replacement. One screen. All five agents. Every question I couldn't answer before — which session is working on what, how much I've spent, whether something is stuck — answered at a glance.

Part 2 explores this cockpit in depth.

The Release

Gluon is live on GitHub at github.com/carrotly-ai/gluon-agent under the MIT license. Version 0.8.0. Production-ready. Actively maintained.

What's included: dashboard, CLI, bot transports (Telegram, Discord), Ralph Loop, formulas for multi-step workflows, supervision systems, circuit breakers. Real infrastructure.

80+ REST endpoints. 40+ MCP tools. 50+ CLI commands. Run it locally, deploy in Docker, connect to your chat workspace. Pick your workflow.

Why Now, Why Open

The timing matters. The AI agents market is $10.91 billion in 2026 and projected to hit $182.97 billion by 2033 — a 49.6% CAGR. This isn't a trend line. It's infrastructure being built right now.

Orchestration is coming either way. The open question is whether it gets built as open infrastructure or locked inside proprietary walled gardens.

I'm betting on open infrastructure. Community-driven orchestration compounds collective intelligence instead of concentrating it. It evolves at the pace of the ecosystem. And honestly, the problems I hit with those five tmux panes are universal. Every developer running multiple AI agents is hitting the same wall I did.

What's Next

This is Part 1 of 4. Part 2 walks through the dashboard and real workflows. Part 3 goes deep on Ralph Loop safety, circuit breaker patterns, and production autonomy. Part 4 tackles team infrastructure — Docker, multi-project coordination, cost management, governance.

The next decade of this work is less about writing code and more about directing it well — judgment, taste, and the discipline to catch what the agents get wrong.

If you're running three or more AI agents right now and can't answer "what's each one doing?" and "what have I spent today?" — that's the gap Gluon fills.

Those five blind tmux panes are what started it. Everything that follows in this series is the instrumentation I wish I'd had that night.

Series Navigation

- Post 1: From tmux Chaos to AI Agent Orchestration (you are here)

- Post 2: Inside the Cockpit

- Post 3: Ralph Loop — Autonomous Execution

- Post 4: From Solo Tool to Team Infrastructure
