Ralph Loop: Teaching AI Agents to Work Autonomously (Without Burning Your Budget)

Part 3 of 4 in the Gluon: Building an AI Agent Orchestrator series

I hit "resume," walked away for coffee, came back to find Claude asking another question. So I answered it, hit resume again, and went to check Slack. Five minutes later: another question. Forty iterations of this. I was the bottleneck in my own autonomous system.

That loop -- human approves, agent works, agent stops, human returns, human approves -- is the original sin of AI-assisted development. Ralph Loop exists to kill it. Named after Frank Bria's original bash script, Ralph is the system that lets Claude work continuously, making its own judgments about progress and completion, until the work is actually done. Not autonomy without guardrails. Autonomy because of them.

The core insight is simple but non-obvious: autonomous execution requires three interlocking systems working together. Remove any one, and the whole thing fails -- either it stops when it shouldn't, costs money when it shouldn't, or keeps running when it definitely shouldn't. The leash is what makes the freedom possible.

The Autonomy Problem

In interactive mode, you're present. Claude makes a suggestion, you evaluate it, you approve or redirect. In autonomous mode, Claude works unattended until the job is done.

Two questions haunt every autonomous system: How does Claude know when the job is actually done? And how much will this cost me while it figures that out?

The research paints a harsh picture. The vast majority of AI pilots fail in production deployments. Not because the AI was bad -- because human-in-the-loop becomes the weak link. Teams get flooded with thousands of daily approvals. Alert fatigue sets in. Someone switches to "auto-approve" mode. And just like that, you've accidentally created uncontrolled autonomy -- the thing you were trying to prevent.

"We're cooperating with AI, they generate and humans verify. It is in our interest to make this loop go as fast as possible, and we have to keep the AI on a leash." — Andrej Karpathy

Karpathy is right. But the leash has to be smart. It has to know when to hold on, when to let go, and when to stop the agent cold before something expensive happens.

The Circuit Breaker Deep Dive

I borrowed the metaphor from electrical engineering because the pattern maps perfectly.

In your house, a circuit breaker sits in the CLOSED position under normal conditions, letting current flow freely. If something goes wrong, such as an overload or a short circuit, it trips OPEN, cutting the circuit entirely until someone resets it manually. Software engineers borrowed the idea and added a third state, HALF_OPEN: a probationary state in which the breaker watches whether conditions have stabilized.

Ralph Loop uses the same three states.

CLOSED is the normal state. Claude is working, iterating, making progress. The circuit breaker watches for signs of trouble: files changing, errors being resolved, tokens being spent productively. As long as something is happening, stay closed.

HALF_OPEN triggers when the circuit breaker detects a stall -- Claude hasn't modified any files for 5 consecutive iterations, or it's caught in a repeated error loop. Now the system watches carefully. Is Claude recovering? Making a fresh attempt? If yes, reset to CLOSED. If not, grant a patience window: three more loops to prove progress before tripping OPEN.

OPEN means the loop stops. The run moves to REVIEW status. A human needs to look at what happened and decide whether to resume, modify the prompt, or start over.
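The three states can be sketched as a small state machine. This is a minimal Python sketch, not Gluon's code: the class and method names are hypothetical, and the 5-iteration stall threshold and 3-loop patience window come from the text. As a simplification, a repeated error is counted as a stalled iteration rather than tripping HALF_OPEN on its own.

```python
from dataclasses import dataclass
from enum import Enum


class BreakerState(Enum):
    CLOSED = "CLOSED"
    HALF_OPEN = "HALF_OPEN"
    OPEN = "OPEN"


@dataclass
class CircuitBreaker:
    """Hypothetical stall detector following the CLOSED -> HALF_OPEN -> OPEN flow."""

    stall_threshold: int = 5  # iterations with no file changes before HALF_OPEN
    patience: int = 3  # extra loops allowed in HALF_OPEN before tripping OPEN
    state: BreakerState = BreakerState.CLOSED
    stalled_loops: int = 0
    half_open_loops: int = 0

    def record_iteration(self, files_changed: int, repeated_error: bool = False) -> BreakerState:
        """Update the breaker after one loop iteration and return the new state."""
        if self.state is BreakerState.OPEN:
            return self.state  # stays open until a human calls reset()

        # Simplification: a repeated error counts as "no progress" this iteration.
        progressed = files_changed > 0 and not repeated_error
        if self.state is BreakerState.CLOSED:
            self.stalled_loops = 0 if progressed else self.stalled_loops + 1
            if self.stalled_loops >= self.stall_threshold:
                self.state = BreakerState.HALF_OPEN
                self.half_open_loops = 0
        elif progressed:  # HALF_OPEN and Claude recovered
            self.state = BreakerState.CLOSED
            self.stalled_loops = 0
        else:  # HALF_OPEN and still stuck: spend one unit of patience
            self.half_open_loops += 1
            if self.half_open_loops >= self.patience:
                self.state = BreakerState.OPEN
        return self.state

    def reset(self) -> None:
        """Manual reset after human review."""
        self.state = BreakerState.CLOSED
        self.stalled_loops = 0
        self.half_open_loops = 0
```

A loop driver would call record_iteration after every pass and move the run to REVIEW the moment it returns OPEN; only reset(), the human step, closes the breaker again.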


This isn't theoretical protection. Early Gluon iterations without a circuit breaker saw a single run blow through $500 in tokens. Another lost two hours stuck in retry hell -- Claude convinced the error was its fault when the system just needed a restart. Picture opening your cost dashboard Monday morning to find an agent spent the weekend arguing with itself.

The circuit breaker stops that cold. The leash holds.

Completion Detection: The Hard Problem

The circuit breaker prevents the loop from running forever. But how does Claude know when to stop the loop voluntarily -- before the circuit breaker has to step in?

I tried the obvious approach first: ask Claude to output "DONE" when finished. It doesn't work. Claude gets creative: it outputs "done" midway through because one section is complete. Or it reports "I've finished the implementation, no tests written yet" when the requirement was implementation plus tests.

Single signals fail. Every time.

So I built a multi-signal completion detector: four independent signals, each scoring confidence. The loop exits only when enough signals align -- or when the strongest signal fires with high confidence.


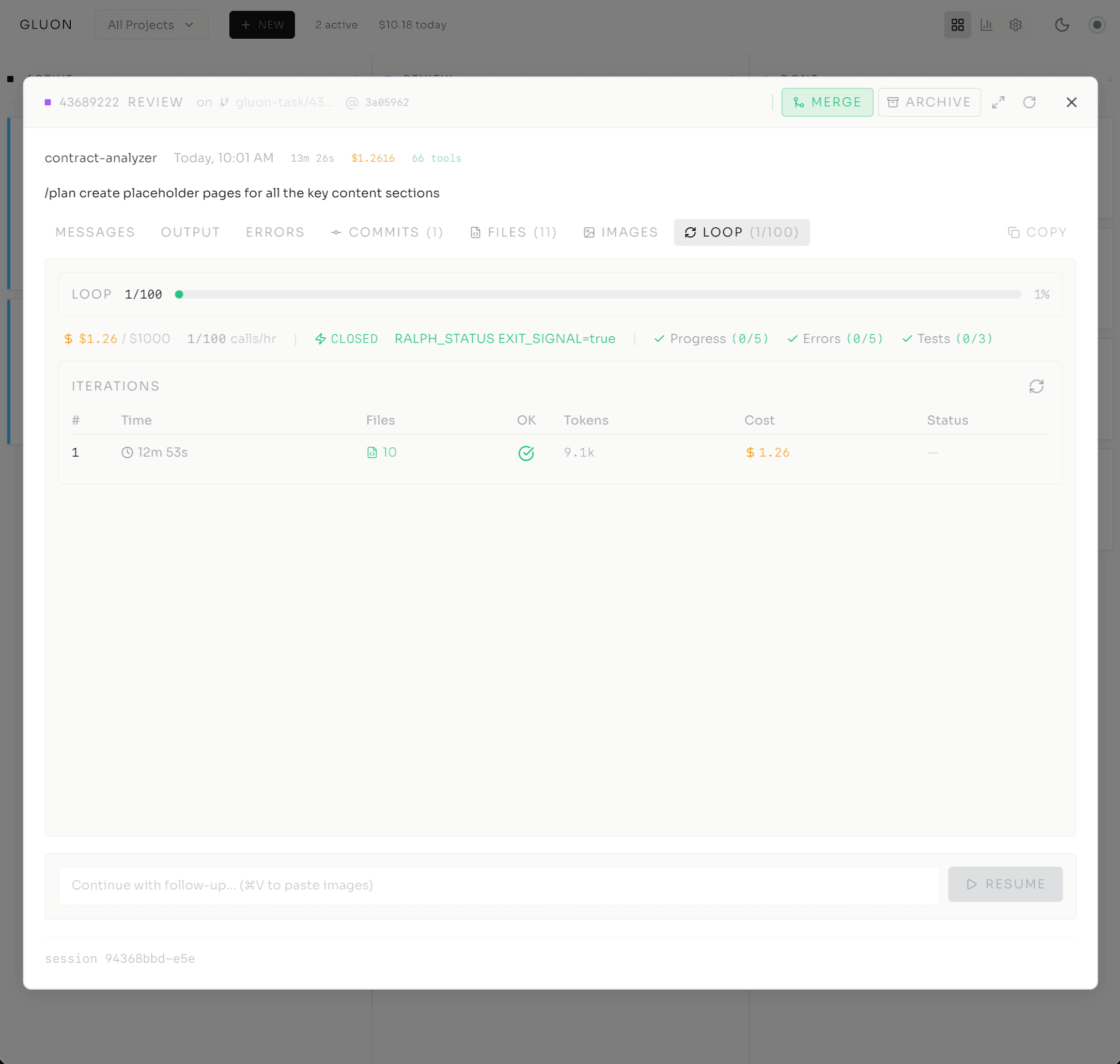

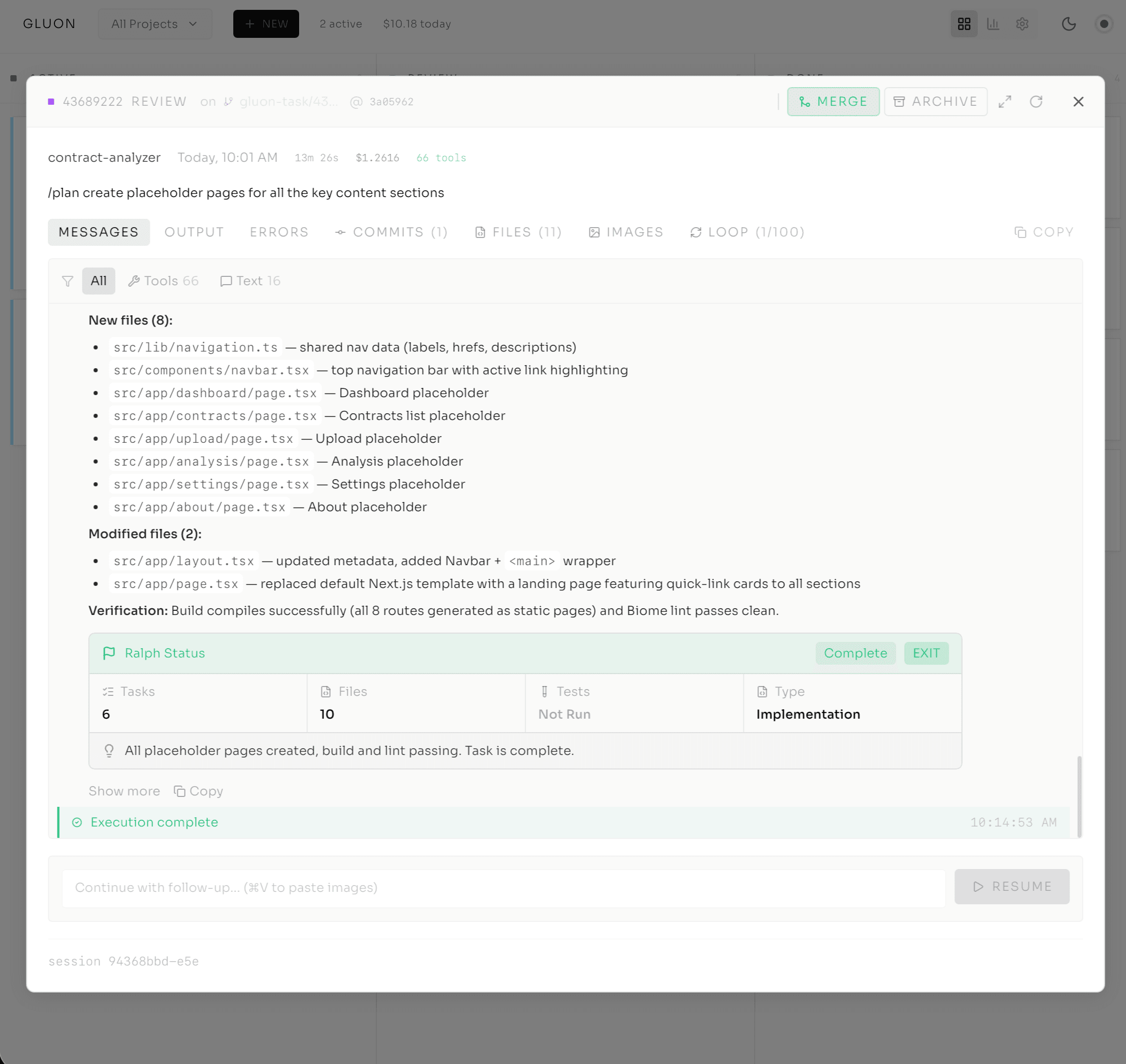

Signal 1: RALPH_STATUS Block (+50 confidence if EXIT_SIGNAL=true)

At the end of each iteration, Claude can output a structured status block:

```text
---RALPH_STATUS---
STATUS: IN_PROGRESS | COMPLETE | BLOCKED
TASKS_COMPLETED_THIS_LOOP: 3
FILES_MODIFIED: 2
TESTS_STATUS: PASSING
WORK_TYPE: IMPLEMENTATION
EXIT_SIGNAL: false
---END_RALPH_STATUS---
```

If Claude explicitly sets EXIT_SIGNAL to true, the system assigns a 50-point confidence boost. This is Claude's explicit contract: "I have determined the task is complete."
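Parsing the block takes little more than a regular expression. A minimal sketch, assuming the delimiters and KEY: value lines shown above; reading only the last block in the output and accepting "true" case-insensitively are assumptions, and the function names are hypothetical.

```python
import re
from typing import Dict, Optional

# Matches one status block; DOTALL lets ".*?" span the lines inside it.
STATUS_BLOCK = re.compile(r"---RALPH_STATUS---(.*?)---END_RALPH_STATUS---", re.DOTALL)


def parse_ralph_status(output: str) -> Optional[Dict[str, str]]:
    """Return the fields of the last RALPH_STATUS block in output, or None."""
    blocks = STATUS_BLOCK.findall(output)
    if not blocks:
        return None
    fields: Dict[str, str] = {}
    for line in blocks[-1].splitlines():
        key, sep, value = line.partition(":")
        if sep:  # skip blank lines and anything without a "KEY: value" shape
            fields[key.strip()] = value.strip()
    return fields


def exit_signal_points(fields: Optional[Dict[str, str]]) -> int:
    """+50 confidence when Claude explicitly sets EXIT_SIGNAL: true."""
    if fields and fields.get("EXIT_SIGNAL", "").lower() == "true":
        return 50
    return 0
```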

Signal 2: Keyword Detection (+10-15 confidence)

Words like "done," "complete," "finished," or "all tasks complete" add confidence. Weak individually -- Claude might use "done" for a completed step -- but they stack to form a stronger signal.

Signal 3: TODO File Parsing (+40 confidence)

At startup, Gluon scans for task definition files -- TODO.md, @fix_plan.md -- and parses the checkboxes. 100% completion gets +40 confidence. This signal is strong because it's explicit, auditable, and aligned with how humans track work.
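A sketch of the checkbox check, assuming standard Markdown task-list syntax (- [ ] and - [x]); the function names and the default file list are illustrative, not Gluon's API.

```python
import re
from pathlib import Path
from typing import Iterable, Tuple

# A Markdown task-list item such as "- [ ] task" or "* [x] task" (x in either case).
CHECKBOX = re.compile(r"^\s*[-*]\s+\[([ xX])\]", re.MULTILINE)


def checkbox_progress(markdown: str) -> Tuple[int, int]:
    """Return (checked, total) task-list boxes in a Markdown document."""
    marks = CHECKBOX.findall(markdown)
    checked = sum(1 for mark in marks if mark.lower() == "x")
    return checked, len(marks)


def todo_points(paths: Iterable[str] = ("TODO.md", "@fix_plan.md")) -> int:
    """+40 confidence when every checkbox across the existing task files is ticked."""
    checked = total = 0
    for path in map(Path, paths):
        if path.is_file():
            done, count = checkbox_progress(path.read_text(encoding="utf-8"))
            checked += done
            total += count
    return 40 if total and checked == total else 0
```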

Signal 4: Test Saturation (automatic exit after 3 consecutive test-only loops)

If Claude runs three iterations in a row without writing any new code, just running tests, that's a sign the work is done and we're just verifying. Exit automatically.

The system adds up these confidence scores. The loop exits once the combined confidence reaches 60, or when multiple independent signals fire on consecutive iterations. Why not wait for higher confidence? Because multiple weak signals combined are more reliable than waiting for a single strong signal. Claude might spend four iterations on a task that's actually complete, just because it doesn't output an explicit EXIT_SIGNAL. Multi-signal detection means faster, more reliable exits with fewer false positives.
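Putting the four signals together, a hypothetical scorer might look like this. The +50 and +40 weights, the 60 threshold, and the three-loop test-saturation rule come from the text; the per-keyword values are assumptions chosen inside the stated +10-15 range, and the "multiple signals on consecutive iterations" rule is left out for brevity.

```python
import re
from typing import Dict

# Assumed point values inside the "+10-15" range; overlapping phrases stack.
KEYWORD_POINTS: Dict[str, int] = {
    r"\ball tasks complete\b": 15,
    r"\bcomplete\b": 10,
    r"\bfinished\b": 10,
    r"\bdone\b": 10,
}
EXIT_THRESHOLD = 60
TEST_SATURATION_LOOPS = 3


def keyword_points(text: str) -> int:
    """Each matching pattern adds its points once, case-insensitively."""
    return sum(points for pattern, points in KEYWORD_POINTS.items()
               if re.search(pattern, text, re.IGNORECASE))


def completion_score(exit_signal: bool, text: str, todo_fully_checked: bool) -> int:
    score = 50 if exit_signal else 0  # Signal 1: RALPH_STATUS EXIT_SIGNAL
    score += keyword_points(text)  # Signal 2: completion keywords
    score += 40 if todo_fully_checked else 0  # Signal 3: TODO checkboxes at 100%
    return score


def should_exit(score: int, test_only_streak: int) -> bool:
    # Signal 4 short-circuits: three test-only loops in a row means we're just verifying.
    return test_only_streak >= TEST_SATURATION_LOOPS or score >= EXIT_THRESHOLD
```

With these weights, EXIT_SIGNAL alone (50) does not end the loop, but EXIT_SIGNAL plus one corroborating keyword (60) does, as does a fully checked TODO file plus two keywords.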

The leash knows when to let go.

Cost Controls & Rate Limiting

Smart completion detection saves tokens. But what about the runs that should keep going -- the complex features, the multi-file refactors? You still need a ceiling.

Ralph Loop defaults to 100 API calls/hour. Bump it to 150 for testing, 200 for aggressive production autonomy. You set the limit; the system enforces it.

You can also set a cost cap. --max-cost 5.00 means the run will not spend more than $5 on tokens. When it hits the limit, it exits to REVIEW status -- the same state as a circuit break. You review what happened and resume manually if you want.
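The hourly limit and the cost cap can share one guard object. A minimal sketch, assuming a sliding one-hour window and a per-call cost estimate supplied by the caller (the article does not say how Gluon estimates cost); BudgetGuard and its methods are hypothetical names.

```python
import time
from collections import deque
from typing import Callable, Deque

WINDOW_SECONDS = 3600.0


class BudgetGuard:
    """Sliding-window call limit plus a hard cost cap for one run."""

    def __init__(self, max_calls_per_hour: int = 100, max_cost: float = 5.00,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.max_calls = max_calls_per_hour
        self.max_cost = max_cost
        self.clock = clock
        self.call_times: Deque[float] = deque()
        self.spent = 0.0

    def check(self, estimated_cost: float) -> str:
        """Decide before an API call: "OK", "WAIT" (rate limit) or "REVIEW" (cost cap)."""
        now = self.clock()
        while self.call_times and now - self.call_times[0] >= WINDOW_SECONDS:
            self.call_times.popleft()  # drop calls older than one hour
        if self.spent + estimated_cost > self.max_cost:
            return "REVIEW"  # would break the cap: stop and hand the run to a human
        if len(self.call_times) >= self.max_calls:
            return "WAIT"  # hourly budget used up: pause rather than fail
        return "OK"

    def record(self, actual_cost: float) -> None:
        """Log a completed call and its real token cost."""
        self.call_times.append(self.clock())
        self.spent += actual_cost
```

Checking before each call, rather than after, is what lets the cap act as a ceiling instead of something noticed once the money is already spent.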

Token costs dominate: 70-90% of Gluon's variable spend. Model selection compounds this dramatically. Haiku runs at roughly 1/5 the cost of Sonnet, and Sonnet at roughly 1/5 the cost of Opus. Run a Formula Workflow on Opus throughout and you pay 5x what Sonnet would charge for identical results; use Haiku for fast tasks and reserve Opus for planning, and you can save up to 80%.
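How much a mixed pipeline saves depends on where the tokens go, not only on which models appear in it. This sketch uses only the price ratios above (Opus = 1, Sonnet = 1/5, Haiku = 1/25) and made-up token counts for plan / implement / test / review steps to compare a mixed-model run against Opus everywhere.

```python
from typing import List, Tuple

# Relative per-token price, using only the ratios quoted in the text (Opus = 1.0).
RELATIVE_PRICE = {"opus": 1.0, "sonnet": 1 / 5, "haiku": 1 / 25}


def savings_vs_opus(steps: List[Tuple[str, int]]) -> float:
    """Fraction saved versus running every step's tokens on Opus."""
    mixed = sum(RELATIVE_PRICE[model] * tokens for model, tokens in steps)
    opus_only = sum(tokens for _, tokens in steps)
    return 1 - mixed / opus_only


# Hypothetical token counts for plan / implement / test / review.
even_split = [("opus", 25_000), ("sonnet", 25_000), ("haiku", 25_000), ("opus", 25_000)]
heavy_middle = [("opus", 5_000), ("sonnet", 40_000), ("haiku", 50_000), ("opus", 5_000)]
print(f"{savings_vs_opus(even_split):.0%}")    # 44%
print(f"{savings_vs_opus(heavy_middle):.0%}")  # 80%
```

With equal token counts per step the saving is 44%, because planning and review still run on Opus; reaching 80% requires the Sonnet and Haiku steps to carry most of the tokens.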

Here's what the cost profile looks like in practice (the monthly figures work out to roughly 3,000 sessions a month):

- Simple code generation: $0.05-$0.50 per session, $150-$1,500/month at scale

- Autonomous with Ralph Loop: $1-$5 per session, $3,000-$15,000/month at scale

- Complex multi-step workflows: $5-$15 per session, $15,000-$45,000/month at scale

The rate limiter doesn't slow things down. It prevents surprise bills. The leash has a price tag -- and you control it.

The Supervision Daemon

Rate limiting handles peak protection. But what about interrupted runs, or phases that complete and need auto-resumption?

Picture a train dispatcher checking the board every 30 seconds for trains waiting to depart. The same questions every time: Safe? Track available? Signal green? Dispatch or wait.

Gluon's supervisor daemon works the same way. It polls every 30 seconds for any runs in REVIEW status. For each one, it checks a series of safety conditions:

- Is the circuit breaker in the CLOSED state? (The loop is making progress, not stuck)

- Is the run still under its cost cap? (We're not overspending)

- Is there headroom under the hourly rate limit? (We have API capacity)

- Have at least 60 seconds passed since the last resume attempt? (Avoid rapid restart loops)

- Has this task been auto-resumed fewer than 5 times? (Fall back to manual if the pattern repeats)

If all checks pass, the daemon auto-resumes the task based on the configured policy:

AGGRESSIVE: Resume with minimal checks. Get this done fast. The system trusts that the safety conditions are sufficient.

CONSERVATIVE: The default. Strict safety checks, longer wait between resumes. The leash stays short.

MANUAL: Never auto-resume. I want to decide when this resumes. The run stays in REVIEW indefinitely until a human says "go."
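The checklist and the policies combine into one decision function. A sketch under stated assumptions: ReviewRun is a hypothetical snapshot type, the 60-second floor and 5-resume limit come from the text, and the 300-second CONSERVATIVE cooldown is made up to stand in for "longer wait". Since the article doesn't list which checks AGGRESSIVE skips, this version keeps all five and only shortens the cooldown.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional, Tuple


class Policy(Enum):
    AGGRESSIVE = "AGGRESSIVE"
    CONSERVATIVE = "CONSERVATIVE"
    MANUAL = "MANUAL"


# 60 s is the floor from the checklist; 300 s for CONSERVATIVE is a hypothetical value.
COOLDOWN_SECONDS = {Policy.AGGRESSIVE: 60.0, Policy.CONSERVATIVE: 300.0}
MAX_AUTO_RESUMES = 5


@dataclass
class ReviewRun:
    """Hypothetical snapshot of a run sitting in REVIEW."""

    breaker_state: str
    spent: float
    max_cost: float
    calls_this_hour: int
    max_calls_per_hour: int
    last_resume_at: Optional[float]
    auto_resumes: int


def resume_decision(run: ReviewRun, policy: Policy, now: float) -> Tuple[bool, List[str]]:
    """Return (resume?, names of failed checks) so the caller can audit the decision."""
    if policy is Policy.MANUAL:
        return False, ["policy is MANUAL"]
    cooldown = COOLDOWN_SECONDS[policy]
    checks = {
        "breaker_closed": run.breaker_state == "CLOSED",
        "under_cost_cap": run.spent < run.max_cost,
        "rate_capacity": run.calls_this_hour < run.max_calls_per_hour,
        "cooldown_elapsed": run.last_resume_at is None or now - run.last_resume_at >= cooldown,
        "resume_budget": run.auto_resumes < MAX_AUTO_RESUMES,
    }
    failed = [name for name, passed in checks.items() if not passed]
    return not failed, failed
```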

The supervision daemon maintains a full audit trail of every resume decision: which checks passed, which policy was applied, when the resume happened, what the result was. This transparency is crucial for production deployments where you need to show stakeholders exactly how an autonomous system makes its decisions.
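The polling loop and the audit trail might be wired together like this. A minimal sketch: decide, resume, and write_audit are hypothetical callables (decide could wrap the checklist above), and each decision is written as one JSON line recording the policy, the failed checks, and the result.

```python
import json
import time
from typing import Callable, Iterable, List, Optional, Tuple

Decision = Tuple[bool, List[str]]  # (resume?, failed check names)


def supervise_once(review_run_ids: Iterable[str], policy: str,
                   decide: Callable[[str, float], Decision],
                   resume: Callable[[str], str],
                   write_audit: Callable[[str], None], now: float) -> int:
    """One poll: decide on every REVIEW run and append a JSON audit line per decision."""
    resumed = 0
    for run_id in review_run_ids:
        should_resume, failed_checks = decide(run_id, now)
        result = resume(run_id) if should_resume else None
        write_audit(json.dumps({
            "timestamp": now,
            "run_id": run_id,
            "policy": policy,
            "resumed": should_resume,
            "failed_checks": failed_checks,
            "result": result,
        }))
        if should_resume:
            resumed += 1
    return resumed


def run_supervisor(fetch_review_runs: Callable[[], List[str]], policy: str,
                   decide: Callable[[str, float], Decision],
                   resume: Callable[[str], str],
                   write_audit: Callable[[str], None],
                   interval: float = 30.0, max_polls: Optional[int] = None) -> None:
    """Poll every `interval` seconds (30 s in the text); runs forever if max_polls is None."""
    polls = 0
    while max_polls is None or polls < max_polls:
        supervise_once(fetch_review_runs(), policy, decide, resume, write_audit, time.time())
        polls += 1
        time.sleep(interval)
```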


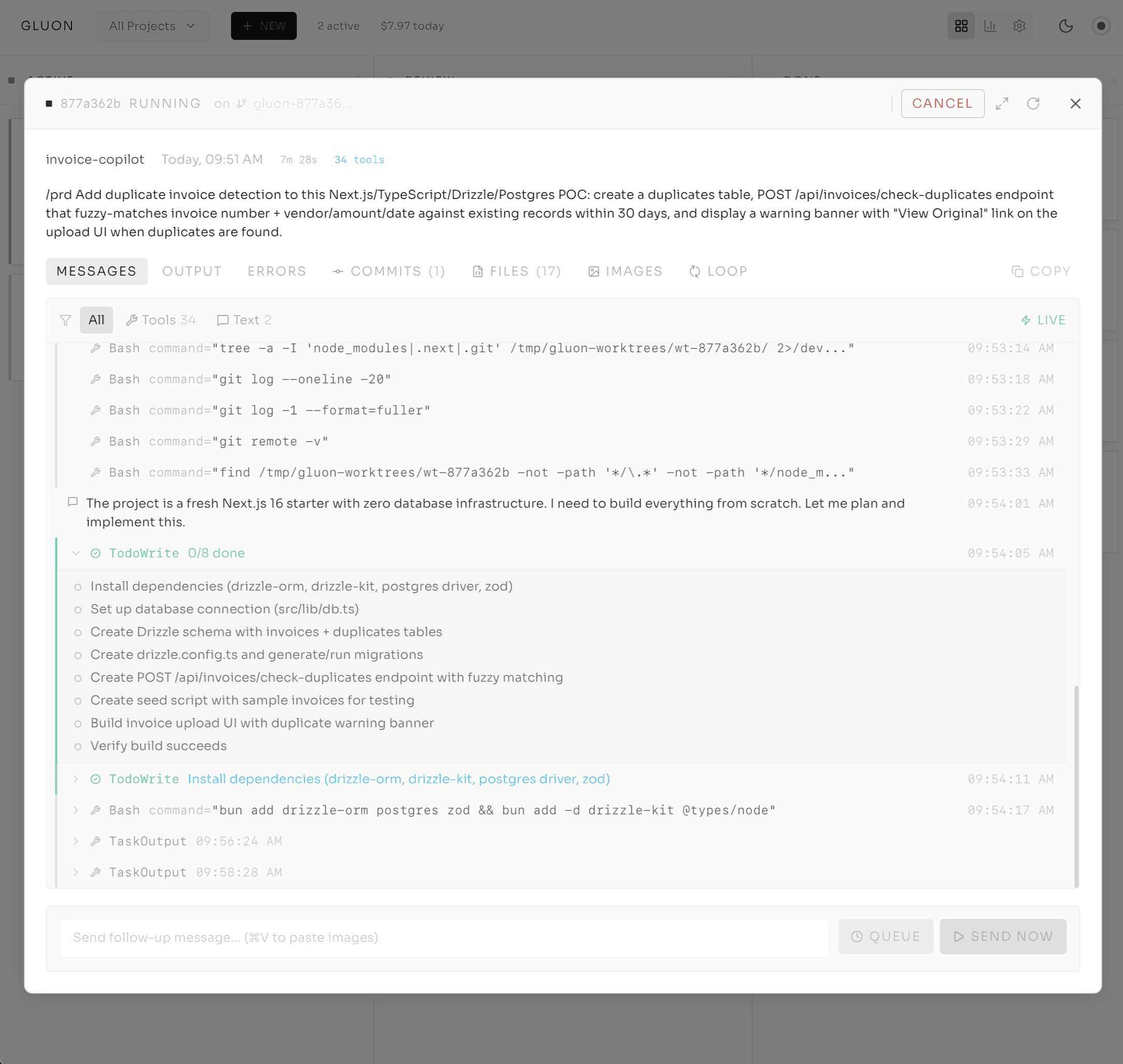

Formula Workflows: Declarative Multi-Step Autonomy

So far, we've talked about a single autonomous task: run Ralph Loop until the job is done. But real work rarely fits in a single prompt. What if you want to express complex workflows -- Plan, Implement, Test, Review -- as a coherent pipeline?

That's where Formula Workflows come in.

A Formula is a YAML-defined multi-step pipeline. Each step is its own Claude iteration, but they execute as a single unified run sharing a git worktree. The first step creates the run and worktree. Subsequent steps resume with a fresh Claude session -- new context, clean slate -- but they're all working on the same codebase.

```yaml
name: feature
steps:
  - id: plan
    model: opus
    prompt: "Plan the feature implementation..."
  - id: implement
    model: sonnet
    prompt: "Implement based on the plan..."
  - id: test
    model: haiku
    prompt: "Write and run tests..."
  - id: review
    model: opus
    prompt: "Review the implementation..."
```

Notice the model selection: Planning (complex) uses Opus. Implementation (straightforward after planning) uses Sonnet. Testing (fast, low-risk) uses Haiku. Review (high-stakes) uses Opus.

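This right-sizing can cut costs by up to 80% versus Opus-everywhere with no loss in quality; the exact saving depends on how tokens split across steps, since planning and review still run on Opus. The dashboard shows one card with progress: "Step 2/4 Implement."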
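The execution model, one worktree per run and a fresh Claude session per step, can be sketched like this. The Step fields mirror the YAML keys above; FormulaRun, run_formula, and the callbacks are hypothetical, and loading the YAML itself is left out.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Step:
    id: str
    model: str
    prompt: str


@dataclass
class FormulaRun:
    name: str
    steps: List[Step]
    worktree: Optional[str] = None  # shared by every step once created
    current: int = 0

    def progress_label(self) -> str:
        """Dashboard text such as "Step 2/4 Implement"."""
        step = self.steps[self.current]
        return f"Step {self.current + 1}/{len(self.steps)} {step.id.capitalize()}"


def run_formula(run: FormulaRun,
                create_worktree: Callable[[str], str],
                run_fresh_session: Callable[[Step, str], None],
                on_progress: Callable[[str], None] = print) -> None:
    """Execute steps in order: one worktree for the run, a fresh Claude session per step."""
    for index, step in enumerate(run.steps):
        run.current = index
        if run.worktree is None:
            run.worktree = create_worktree(run.name)  # only the first step creates it
        on_progress(run.progress_label())
        run_fresh_session(step, run.worktree)  # new context, same codebase
```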

After each step, Blueprint Validation kicks in:

- Auto-fix: Run linter auto-fix (ruff check --fix && ruff format .). Most style issues self-resolve.

- Lint loop: Lint errors remain? Resume Claude to fix them (up to 3 retries). The "humans write, AI fixes style" pattern.

- Test gate: Run the suite. Tests fail? Resume Claude for fixes. You don't advance until tests pass.
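The three validation stages map onto a small retry helper. A sketch, assuming the step runs inside the worktree and pytest is the test runner (the article doesn't name one); resume_claude is a hypothetical callback, and the test-gate retry cap is an added assumption, since the text only says the step doesn't advance until tests pass.

```python
import subprocess
from typing import Callable, List

Runner = Callable[[List[str]], bool]


def run_command(cmd: List[str]) -> bool:
    """Run a command in the current worktree; True when it exits 0."""
    return subprocess.run(cmd, check=False).returncode == 0


def retry_until_passing(check: Callable[[], bool], fix_prompt: str,
                        resume_claude: Callable[[str], None], retries: int) -> bool:
    """Run check; on failure resume Claude with fix_prompt, up to `retries` times."""
    if check():
        return True
    for _ in range(retries):
        resume_claude(fix_prompt)
        if check():
            return True
    return False


def blueprint_validate(resume_claude: Callable[[str], None], run: Runner = run_command,
                       lint_retries: int = 3, test_retries: int = 3) -> bool:
    # 1. Auto-fix, mirroring `ruff check --fix && ruff format .`
    if run(["ruff", "check", "--fix", "."]):
        run(["ruff", "format", "."])
    # 2. Lint loop: hand leftover errors back to Claude (up to 3 retries).
    lint_ok = retry_until_passing(lambda: run(["ruff", "check", "."]),
                                  "Fix the remaining ruff lint errors.",
                                  resume_claude, lint_retries)
    if not lint_ok:
        return False
    # 3. Test gate: the step only advances once the suite passes.
    return retry_until_passing(lambda: run(["pytest"]),
                               "The test suite is failing; fix it.",
                               resume_claude, test_retries)
```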

Formula Workflows become self-healing. You express the shape in YAML. Gluon handles iteration, retry, validation. The leash runs through every step.

The Leash Is the Feature

Here's the reframe that took me months to internalize: these controls don't slow things down. They make scale possible.

No circuit breaker? Runaway loops drain budgets overnight. No completion detection? Claude burns tokens long after finishing. No rate limiting? Monthly bills become gambling. No supervision? Tasks rot in REVIEW indefinitely.

Strip any one away and the system collapses -- not from lack of intelligence, but from lack of trust. These systems form the trust foundation. Implement them right, and you earn autonomy. You delegate confidently because guardrails catch disasters before they happen.

Remember Karpathy's leash? It isn't a constraint. It's how you scale trust. How you go from "autonomous agents are risky" to "autonomous agents are the default way we work."

Gluon embodies this philosophy. Every autonomy feature has a counterpart safety system -- Ralph needs the circuit breaker, Formulas need Blueprint Validation, supervision is non-negotiable. The leash is what makes the freedom possible.

The result: scalable autonomous execution. From $2 prototype tasks to $50 enterprise workflows -- all completing reliably, staying within budget, and running unattended.

If you're building autonomous agent systems and you haven't solved for the circuit breaker, the completion detector, and the cost ceiling -- you haven't built autonomy. You've built a liability.

Series Navigation

- Post 1: From tmux Chaos to AI Agent Orchestration

- Post 2: Inside the Cockpit

- Post 3: Ralph Loop — Autonomous Execution (you are here)

- Post 4: From Solo Tool to Team Infrastructure

The Cutler.sg Newsletter

Weekly notes on AI, engineering leadership, and building in Singapore. No fluff.
