From Solo Tool to Team Infrastructure: Scaling Gluon for Production

Part 4 of 4 in the Gluon: Building an AI Agent Orchestrator series

Five developers. Twelve agents running in parallel. No one knows which agent just deleted a production config file.

That's not a hypothetical. That's what happens when you take a personal tool -- one developer, one machine, one set of tmux panes -- and hand it to a team without rethinking the infrastructure underneath. Everything I built in the first three posts works beautifully for one person. For five people, it becomes a liability.

Three months ago, I showed you Gluon running on my Mac mini -- a personal tool solving a personal problem. Today, it's evolved into production infrastructure for autonomous agents at team scale. That evolution forced me to rethink almost everything. Not the Claude integrations. Not the core orchestration loop. But everything around it: security isolation, cost governance, operational visibility, and how humans actually stay in control when AI agents multiply.

The question that drives this entire post: who's holding the leash when there are twelve leashes?

The Inflection Point

The transition from solo developer tool to team infrastructure is fundamental because the failure modes change completely.

When I'm the only user, I know my own tolerance for agent autonomy. I know which projects are sensitive. I know my budget. I can glance at a terminal and tell whether an agent is stuck or thinking.

A team of five doesn't have any of that shared context. New constraints surface:

- Security isolation: Each agent runs with capabilities. What stops one from corrupting another's work? What prevents accidental access to ~/.aws or production credentials?

- Governance visibility: How do you enforce consistent autonomy policies across agents when every developer has different risk tolerance?

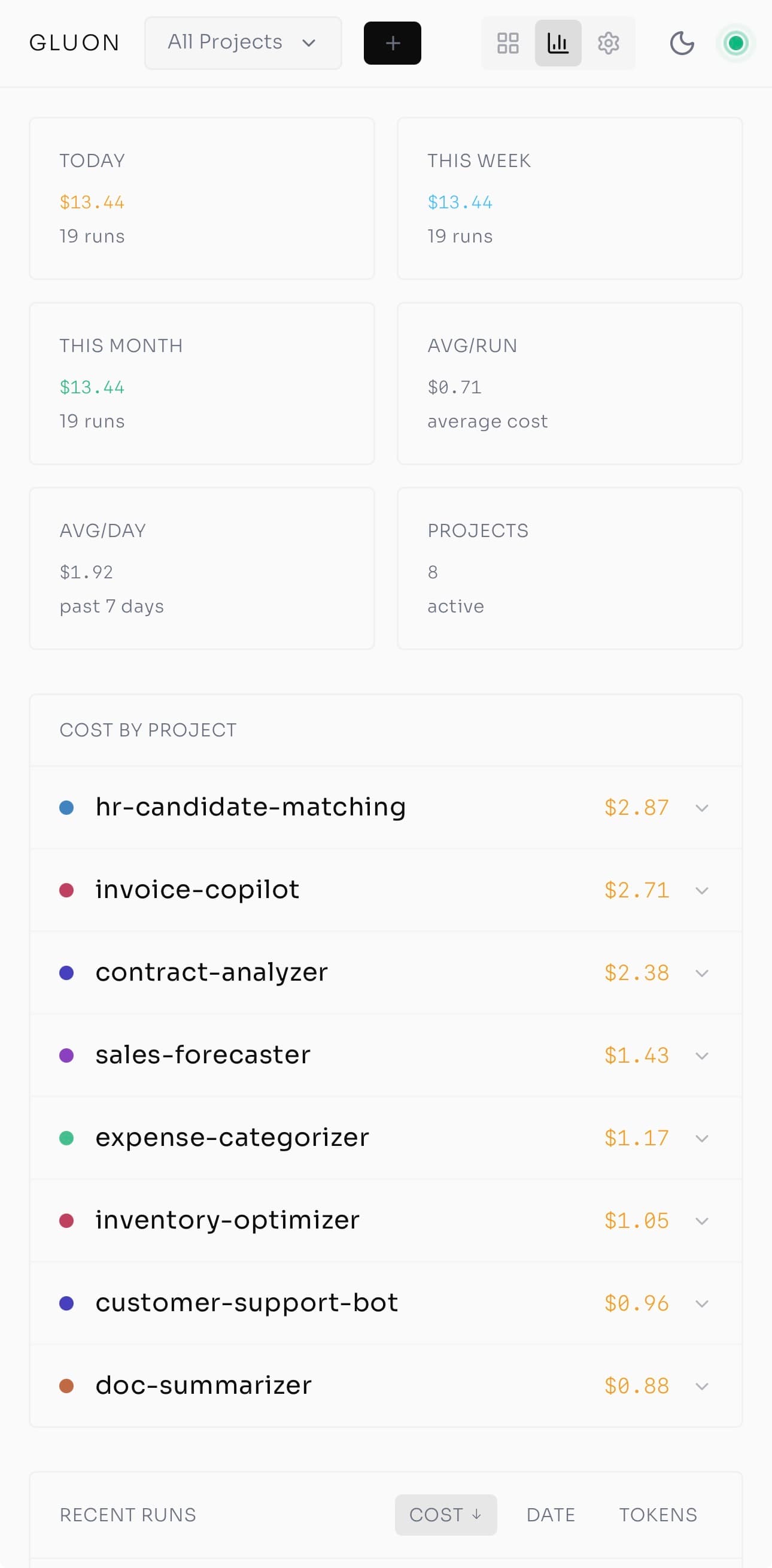

- Cost attribution: Solo development? One bill. Teams? You need to know which project, which agent, which user burned through the budget.

- Observability: Humans can't read logs in real-time at scale. They need signals that proactively tell them something's wrong.

- Failure recovery: When an agent gets stuck or the network fails, can it resume gracefully? Or does someone lose three hours of work?

Building for one is fundamentally different from building for many. Here's what changed.

Security & Isolation: Each Agent Gets a Sandbox

Autonomous agents wielding code execution tools are powerful and dangerous. Without isolation, one misconfigured agent can corrupt another's work -- or worse, touch production data.

That can't happen. Not once.

Gluon's security model is defense-in-depth. Each agent runs in an isolated OS-level sandbox with four enforced boundaries:

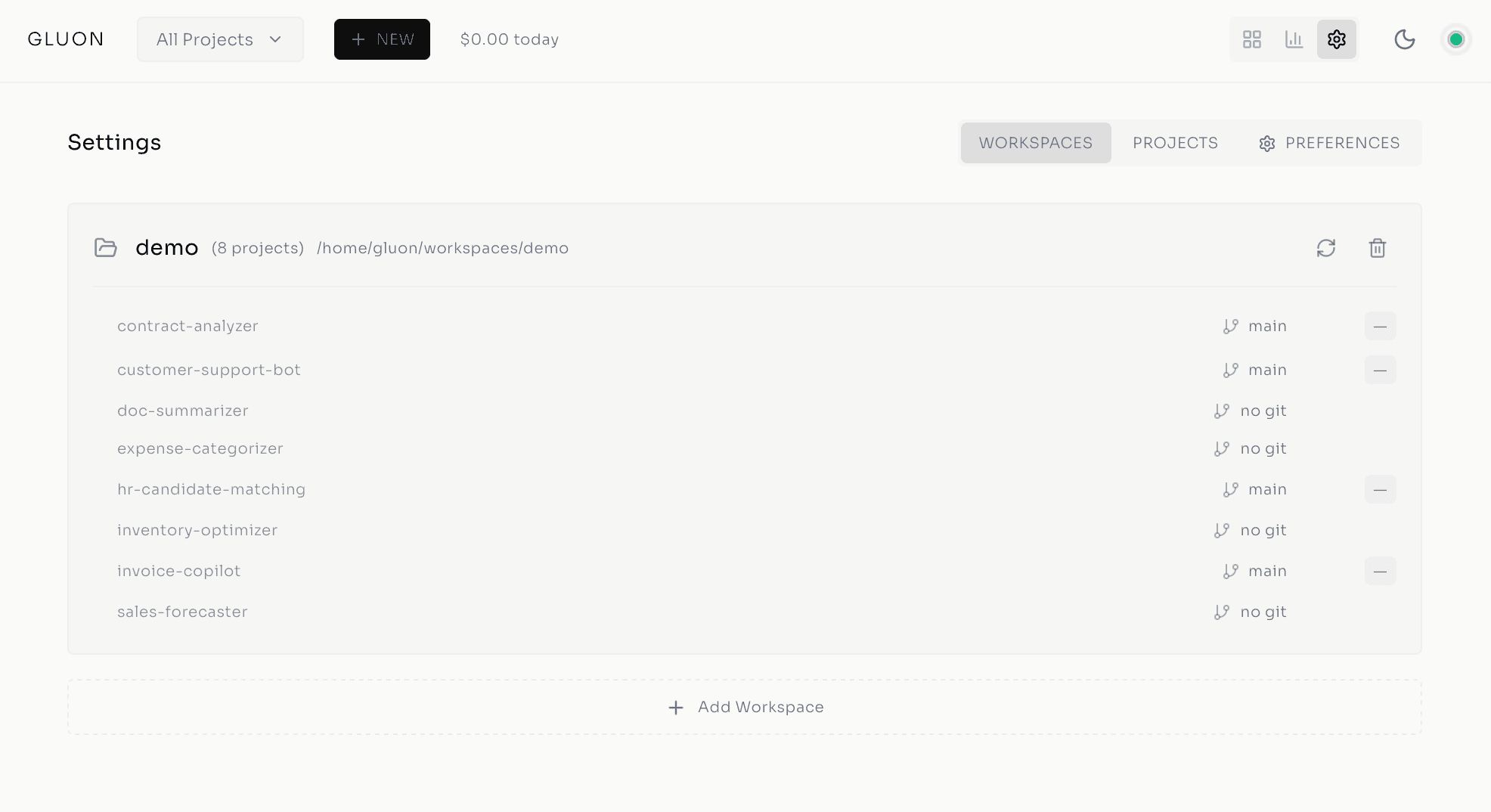

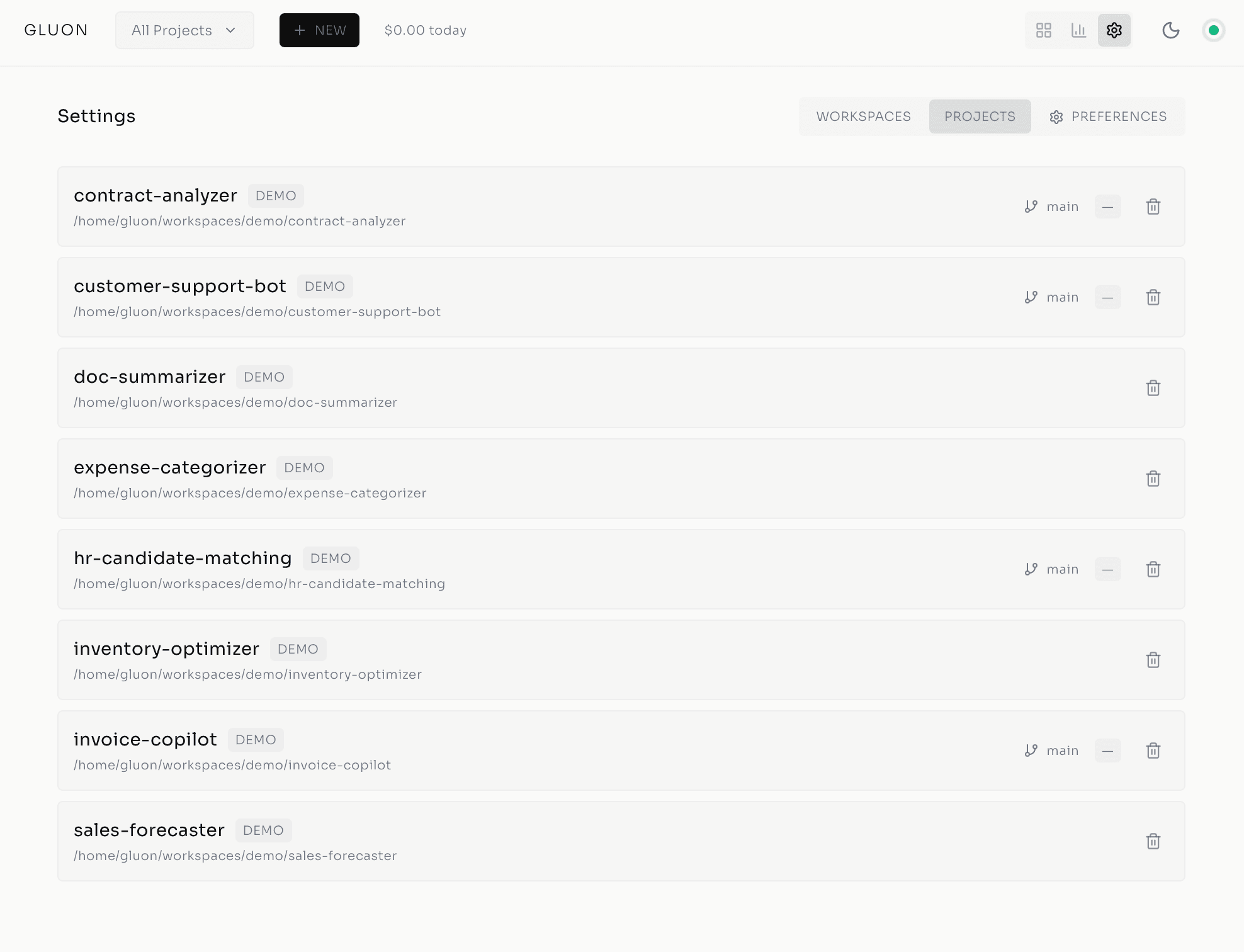

Filesystem sandboxing via bubblewrap (Linux) or sandbox-exec (macOS) restricts agent access to a git worktree -- the specific git branch created for that task. Agents can't escape the sandbox and touch ~/.aws, ~/.ssh, or your home directory. They work within their assigned desk. Period.
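To make the filesystem boundary concrete, here is a minimal sketch of how a bubblewrap command line could be assembled for a single worktree. It is not Gluon's actual invocation: the function name `bwrap_argv` and the exact set of system paths are assumptions, and a real setup would likely need more read-only binds (for example /etc or /lib64) depending on the distribution. The bwrap flags themselves are standard.

```python
from pathlib import PurePosixPath


def bwrap_argv(worktree: PurePosixPath, command: list[str]) -> list[str]:
    """Build a bubblewrap argv that confines `command` to one git worktree.

    Hypothetical helper for illustration; paths and flag selection are assumptions.
    """
    wt = str(worktree)
    return [
        "bwrap",
        "--unshare-all",                # fresh namespaces: PIDs, IPC, network, ...
        "--share-net",                  # re-enable networking so the agent can reach its API
        "--die-with-parent",            # tear the sandbox down if the orchestrator exits
        "--ro-bind", "/usr", "/usr",    # system binaries and libraries, read-only
        "--symlink", "usr/bin", "/bin",
        "--symlink", "usr/lib", "/lib",
        "--proc", "/proc",
        "--dev", "/dev",
        "--tmpfs", "/tmp",
        "--bind", wt, wt,               # the only writable host path
        "--chdir", wt,
        *command,
    ]
```

Because the sandbox root starts empty and the home directory is never bound, paths such as ~/.aws and ~/.ssh simply do not exist from the agent's point of view.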

PUID/PGID support ensures agents inherit your host user permissions, not root. This is critical for Docker deployments. If an agent needs to run npm install and write to your project's node_modules, it can -- because it's running as you, not as an omnipotent root user. One layer of defense against privilege escalation.

Scoped volume mounts define exactly what the container can access:

- ~/.claude (read-write) -- Claude CLI credentials

- ~/.gluon (read-write) -- Database, logs, images

- ~/workspaces (read-write) -- Project source code

- ~/.aws (read-only) -- AWS credentials for Bedrock API calls

- ~/.config/gh (read-only) -- GitHub CLI configuration

Everything else is off-limits. No access to system binaries beyond what's in the container. No access to your personal documents.

Resource limits cap CPU and memory per agent: 8 CPU cores and 12 GB RAM by default (configurable per workspace). Prevents runaway agents from melting your infrastructure.
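As a rough illustration of how the mounts, the PUID/PGID values, and the default resource limits might translate into a container launch, the sketch below builds a `docker run` argument list. The image name, the in-container home path `/home/gluon`, and the idea that the container reads `PUID`/`PGID` from environment variables are all assumptions for the example, not confirmed details of Gluon's Docker setup. The `--cpus`, `--memory`, `-v`, and `-e` flags are standard Docker options.

```python
from pathlib import PurePosixPath

# (path relative to the host home directory, mount mode), taken from the list above.
MOUNTS = [
    (".claude", "rw"),
    (".gluon", "rw"),
    ("workspaces", "rw"),
    (".aws", "ro"),
    (".config/gh", "ro"),
]


def docker_run_argv(
    image: str,
    home: PurePosixPath,
    uid: int,
    gid: int,
    cpus: int = 8,                         # default from the post
    memory_gb: int = 12,                   # default from the post
    container_home: str = "/home/gluon",   # assumed in-container path
) -> list[str]:
    """Assemble a `docker run` argv with scoped mounts and resource caps."""
    argv = [
        "docker", "run", "--rm",
        "--cpus", str(cpus),
        "--memory", f"{memory_gb}g",
        "-e", f"PUID={uid}",
        "-e", f"PGID={gid}",
    ]
    for rel, mode in MOUNTS:
        argv += ["-v", f"{home / rel}:{container_home}/{rel}:{mode}"]
    argv.append(image)
    return argv
```

On a Linux host you would typically pass `os.getuid()` and `os.getgid()` for `uid` and `gid`, so files the agent writes stay owned by you.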

The result: a security model that enterprise teams actually need. When your engineers are orchestrating agents instead of writing code directly, you must guarantee agents can't interfere with each other or access what they shouldn't. Each agent gets its own sandbox. No exceptions.

Agent Teams & Parallel Coordination

Once agents are isolated, the next problem is coordination. How do multiple agents work on the same task without stepping on each other?

Claude Code's Agent Teams capability -- native to the Claude Agent SDK -- makes this possible. A lead agent spawns multiple subagents concurrently, each working on distinct subtasks in parallel, then synthesizes the results.

Picture implementing a feature: API endpoint, database schema, frontend form, and tests. Instead of one agent sequentially implementing each piece (and potentially introducing inconsistencies), you spawn four subagents:

- Subagent 1: Design and implement the API endpoint

- Subagent 2: Create database schema and migrations

- Subagent 3: Build frontend form with validation

- Subagent 4: Write comprehensive tests

They work in parallel. Gluon's SubagentTracker monitors start/stop events in real-time via agent hooks. The lead agent (running Opus for reasoning) synthesizes the results, validates consistency, and surfaces conflicts.
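The tracking idea can be sketched in a few lines. This is not Gluon's SubagentTracker; the class name `TrackerSketch` and the event payload shape (`type`, `id`, `name`, `ts`) are hypothetical, chosen only to show how start/stop hook events could be folded into a view of which subagents are still running.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class SubagentRecord:
    name: str
    started_at: float
    stopped_at: Optional[float] = None

    @property
    def running(self) -> bool:
        return self.stopped_at is None


@dataclass
class TrackerSketch:
    """Folds start/stop events into per-subagent records (illustrative only)."""
    records: dict = field(default_factory=dict)

    def on_event(self, event: dict) -> None:
        # Assumed payload: {"type": "start"|"stop", "id": str, "name": str, "ts": float}
        now = event.get("ts", time.time())
        if event["type"] == "start":
            self.records[event["id"]] = SubagentRecord(event["name"], now)
        elif event["type"] == "stop" and event["id"] in self.records:
            self.records[event["id"]].stopped_at = now

    def running(self) -> list:
        return sorted(r.name for r in self.records.values() if r.running)

    def all_done(self) -> bool:
        # True once at least one subagent has started and none are still running.
        return bool(self.records) and not self.running()
```

A lead agent could wait for `all_done()` before starting its synthesis pass.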

A feature that previously took one agent 4-6 hours now completes in 1-2 hours with higher quality. Parallelism at the agent level -- the same principle that made parallel human teams productive, applied to AI.

Best practice: structure prompts with 2-5 distinct subtasks, mention shared files subagents should reference, and end with explicit synthesis instructions.

This is the conductor metaphor from Workato: "AI agent orchestration coordinates across multiple AI agents so they can collaborate and carry out complex tasks. Without a conductor, you don't get beautiful music -- you get noise."

Without a conductor, parallel agents tangle. With one, they pull in formation.

Work Queue & Merge Queue: Task Orchestration

Coordinating multiple agents in parallel is one problem. Coordinating human workflows around those agents is another.

The work queue solves the "too many agents fighting for attention" chaos. Queue 10 bugs Monday morning. Gluon dispatches them across the week, respecting rate limits and cost caps. No babysitting. Items are batched, prioritized, and pushed to available slots in real-time. WebSocket updates keep the dashboard live.
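Here is a minimal sketch of that dispatch logic, assuming a priority heap, a fixed number of agent slots, and a budget that is reserved using an estimated cost per item. All names (`WorkQueueSketch`, `est_cost_usd`, and so on) are hypothetical, and rate limiting is left out for brevity.

```python
import heapq
from dataclasses import dataclass, field
from itertools import count


@dataclass(order=True)
class WorkItem:
    priority: int                       # lower number = more urgent
    seq: int                            # tie-breaker: first queued, first served
    title: str = field(compare=False)
    est_cost_usd: float = field(compare=False)


class WorkQueueSketch:
    def __init__(self, max_slots: int, cost_cap_usd: float):
        self.max_slots = max_slots
        self.cost_cap_usd = cost_cap_usd
        self.spent_usd = 0.0
        self.running = []
        self._heap = []
        self._seq = count()

    def enqueue(self, title: str, priority: int, est_cost_usd: float) -> None:
        item = WorkItem(priority, next(self._seq), title, est_cost_usd)
        heapq.heappush(self._heap, item)

    def dispatch(self) -> list:
        """Start queued items while a slot is free and the budget allows it."""
        started = []
        while self._heap and len(self.running) < self.max_slots:
            nxt = self._heap[0]
            if self.spent_usd + nxt.est_cost_usd > self.cost_cap_usd:
                break  # stays queued until a human raises the cap
            heapq.heappop(self._heap)
            self.spent_usd += nxt.est_cost_usd
            self.running.append(nxt)
            started.append(nxt)
        return started

    def finish(self, item: WorkItem) -> None:
        self.running.remove(item)  # frees a slot for the next dispatch()
```

Calling `dispatch()` whenever an item finishes, or on a timer, is enough to keep the slots full without anyone babysitting the queue.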

The merge queue tackles a specific pain point: coordinating PR merges when agents generate pull requests. Multiple agents might touch overlapping files. Conflicts are inevitable. Traditional CI/CD blocks merges until someone manually rebases. Gluon's merge queue processes PRs sequentially with conflict detection. It shows exactly which files collided. One-click AI conflict resolution runs Claude on the rebase, resolving conflicts programmatically.
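The sequential part can be illustrated with a deliberately simplified model in which a "conflict" means a PR touches a file that an earlier PR in the queue already changed. That is a conservative stand-in for real git conflict detection, which would come from attempting the rebase. The `PullRequest` type and `process_merge_queue` function are hypothetical names for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PullRequest:
    number: int
    files: frozenset


def process_merge_queue(queue: list) -> tuple:
    """Merge PRs in order; flag any PR whose files overlap an earlier merge.

    Returns (merged PR numbers, {PR number: sorted list of colliding files}).
    """
    merged = []
    conflicts = {}
    touched = set()
    for pr in queue:
        collided = sorted(pr.files & touched)
        if collided:
            conflicts[pr.number] = collided  # needs a rebase before it can merge
            continue
        merged.append(pr.number)
        touched |= pr.files
    return merged, conflicts
```

The `conflicts` mapping is the piece a dashboard would surface: exactly which files collided, per PR, ready to hand to a conflict-resolution step.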

The workflow: queue, dispatch, run, review, done. Humans set policy. Agents execute. No context switching. No manual rebase drudgery.

Observability: The Witness Health Monitor

With work flowing through queues, teams need visibility into what's happening. But at scale, humans can't read logs. They need signals.

Enter the Witness Health Monitor -- a background process that classifies running agents into five states:

- Healthy: Normal progress, files changing, iteration advancing

- Slow: Making progress but below expected throughput

- Looping: Repeating similar actions, no forward movement

- Stuck: No file changes for five consecutive iterations

- Zombie: Process alive but unresponsive


Each classification appears as a colored dot on task cards in the Kanban board: green, yellow, orange, red, gray. At a glance, you know which agents are humming along and which need attention.
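A classifier along these lines can be sketched as a short decision chain. Only the five-iteration rule for Stuck comes from the description above; the other thresholds, the order in which checks are applied, the snapshot fields, and the pairing of colors to states (taken from the order in which they are listed) are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Health(Enum):
    # Color pairing assumed from the listing order of states and colors.
    HEALTHY = "green"
    SLOW = "yellow"
    LOOPING = "orange"
    STUCK = "red"
    ZOMBIE = "gray"


@dataclass
class AgentSnapshot:
    responsive: bool                      # did the process answer a liveness check?
    iterations_without_file_change: int
    repeated_action_ratio: float          # share of recent actions repeating earlier ones
    throughput_ratio: float               # observed progress / expected progress


def classify(
    s: AgentSnapshot,
    loop_threshold: float = 0.8,          # assumed value
    slow_threshold: float = 0.5,          # assumed value
) -> Health:
    """Map a snapshot to one of the five states, most severe check first."""
    if not s.responsive:
        return Health.ZOMBIE
    if s.iterations_without_file_change >= 5:   # rule stated in the post
        return Health.STUCK
    if s.repeated_action_ratio >= loop_threshold:
        return Health.LOOPING
    if s.throughput_ratio < slow_threshold:
        return Health.SLOW
    return Health.HEALTHY
```

Checking the most severe condition first means an unresponsive process is never reported as merely slow.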

Why does this matter? Because the most common failure mode in production AI isn't bad output. It's overwhelmed humans. Teams get flooded with thousands of daily approvals and log messages. Alert fatigue sets in. Someone switches to "auto-approve" -- and the careful governance you built evaporates. Witness turns that chaos into five colored dots you can read in one glance.

Natural Language Interfaces: From Terminal to Anywhere

Dashboards are great when you're at your desk. But teams live in Slack, Discord, and Telegram now.

Gluon's chat bots bring the full orchestrator to natural language. Telegram and Discord bots speak English (or whatever language you prefer). Behind them: Claude reasoning plus 40+ MCP tools covering project management, git operations, run management, work queue, merge queue, and system admin. Model selection via flags: --model opus for reasoning-heavy tasks, --model haiku for quick answers.

Real-world example: You're in a meeting. Someone mentions the bug-fix agent looks stuck. Flip to Telegram, type "Cancel run bugfix-12, resume with more aggressive search." Gluon handles it. No SSH. No terminal. No specialized knowledge.

The Witness colors appear in the chat. Cost tracking is available. Task creation, status checks, and conflict resolution all flow through the same interface.

Gluon is also a Progressive Web App. Install it on your phone. Full mobile dashboard. Tailscale tunnel for secure remote access. Monitor Ralph loops from a coffee shop. Cost caps, health indicators, and cancel buttons at your fingertips.

The biggest unlock here isn't the technology. It's that anyone on the team can hold a leash without being a terminal expert.

The Governance Gap

Here's where the narrative shifts from features to stakes.

All these systems -- isolation, coordination, visibility, chat interfaces -- address a single root problem: humans can't scale at the rate of AI. Forty-five billion non-human agent identities are projected by the end of 2026. Only 10% of organizations have governance strategies.

The real villain isn't AI capability. It's the assumption that informal governance -- "we'll figure it out as we go" -- works when agents multiply faster than the policies governing them.

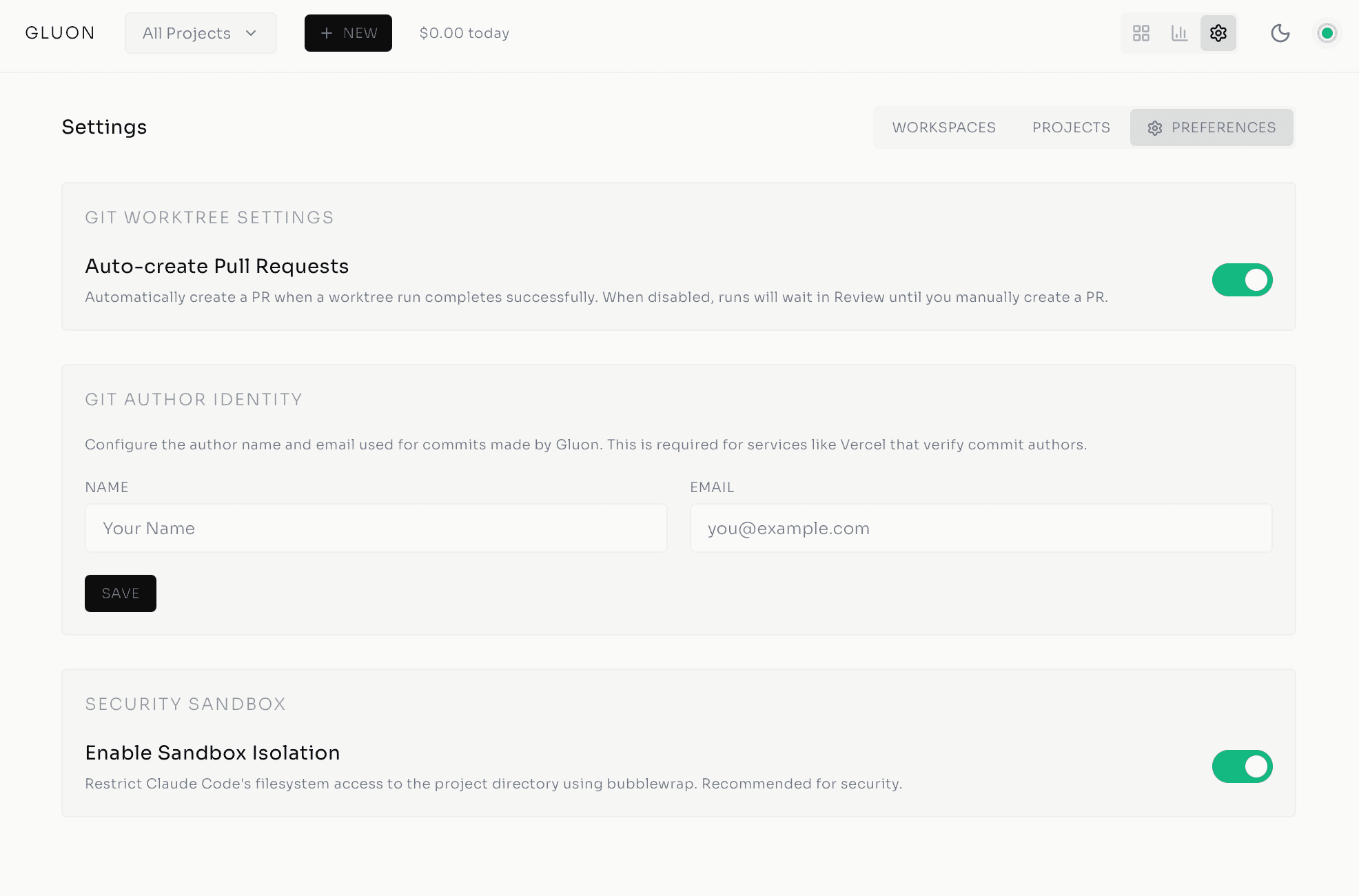

Gluon's answer is explicit governance:

Supervision policies define auto-resume behavior per task -- from aggressive (minimal checks, fast turnaround) through conservative (the default) to fully manual. Post 3 covered the details; the point here is that teams need consistent policies across all their agents, not ad-hoc decisions per developer.

Circuit breakers (the 3-state pattern from Post 3) stop runaway loops before they drain budgets. At team scale, this is non-negotiable -- you can't rely on someone watching every agent.
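For readers who skipped Post 3, here is a generic sketch of a three-state breaker, assuming the conventional closed / open / half-open states. The thresholds, the class shape, and the injectable clock are illustrative choices, not a copy of Gluon's implementation.

```python
import time
from enum import Enum


class State(Enum):
    CLOSED = "closed"        # normal operation
    OPEN = "open"            # tripped: block further iterations
    HALF_OPEN = "half_open"  # cooldown elapsed: allow one trial iteration


class CircuitBreaker:
    def __init__(self, max_failures: int = 3, cooldown_s: float = 60.0,
                 clock=time.monotonic):
        self.max_failures = max_failures
        self.cooldown_s = cooldown_s
        self.clock = clock
        self.state = State.CLOSED
        self.failures = 0
        self.opened_at = 0.0

    def allow(self) -> bool:
        """Return True if the agent may run another iteration."""
        if self.state is State.OPEN and self.clock() - self.opened_at >= self.cooldown_s:
            self.state = State.HALF_OPEN
        return self.state is not State.OPEN

    def record(self, success: bool) -> None:
        """Report the outcome of the iteration that allow() permitted."""
        if success:
            self.state, self.failures = State.CLOSED, 0
            return
        self.failures += 1
        # A failed trial in HALF_OPEN re-opens immediately.
        if self.state is State.HALF_OPEN or self.failures >= self.max_failures:
            self.state, self.opened_at = State.OPEN, self.clock()
```

The point at team scale is that `allow()` is checked by the orchestrator on every iteration, so a runaway loop stops even when nobody is watching that agent.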

100% audit logging records every decision, every cost, every tool call. Compliance and accountability are built in, not bolted on.

Cost visibility tracks token spend, API calls, and cost-per-run. Cost caps are enforced. Agents with runaway expenses don't surprise you with a $5,000 bill.
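A per-run cap with attribution by user and project can be sketched as a small ledger. The names here (`CostLedger`, `cap_usd_per_run`) and the choice to key attribution on a (user, project) pair are assumptions for the example; the real schema may differ.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class CostLedger:
    cap_usd_per_run: float
    by_run: dict = field(default_factory=lambda: defaultdict(float))
    by_owner: dict = field(default_factory=lambda: defaultdict(float))

    def record(self, run_id: str, user: str, project: str, cost_usd: float) -> bool:
        """Record spend that has already happened.

        Returns True if the run is still within its cap, False if the
        orchestrator should stop it.
        """
        self.by_run[run_id] += cost_usd
        self.by_owner[(user, project)] += cost_usd
        return self.by_run[run_id] <= self.cap_usd_per_run
```

Recording first and checking second reflects that the tokens are already spent by the time the cost is known; the cap decides whether the next call happens.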

And the critical detail: explicit exit signals. Ralph's design includes dual-condition checks -- both a COMPLETE status and an explicit EXIT_SIGNAL flag. Two conditions, not one. Because if you only check for "completion," an agent can get stuck in a loop claiming victory.
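Expressed as code, the dual-condition check is tiny. How Ralph actually reports its status and flag is not shown here, so the function signature below is an assumption; only the two names COMPLETE and EXIT_SIGNAL come from the text.

```python
def should_exit(status: str, exit_signal: bool) -> bool:
    """Stop the loop only when BOTH conditions hold.

    `status` is the agent's self-reported state; `exit_signal` stands in for
    the explicit EXIT_SIGNAL flag. Either one alone is not enough.
    """
    return status == "COMPLETE" and exit_signal is True
```

An agent that reports COMPLETE without raising the flag keeps iterating (or trips the circuit breaker), rather than being taken at its word.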

The leash from Post 3? At team scale, it becomes governance infrastructure. Every team member holds it the same way.

The Role Shift: From Code Writer to Orchestrator

Here's the honest admission I keep circling back to: my job has quietly stopped being writing code and started being deciding what gets built, by whom, and whether what came back is right. That shift isn't theoretical -- it's already how my week runs.

The skills shift accordingly:

- Advanced prompt engineering: Phrasing tasks so agents understand intent

- Systemic thinking: Designing agent workflows across multiple specialists

- PromptOps: Versioning prompts, monitoring agent behavior, tuning for quality

- Supervision design: Setting policies, guardrails, and exit conditions

Over half of companies expect to use AI orchestration by 2026. The market is signaling what practitioners already feel.

Gluon exists to make this pattern production-ready -- not just for enterprises with 500-person engineering teams, but for 5-person startups and solo developers coordinating agents across projects. The orchestrator doesn't care how big your team is. It cares that someone's holding every leash.

Who Holds the Leash

The architecture has come a long way from five tmux panes -- sandboxes, agent teams, work and merge queues, the Witness monitor, chat interfaces, explicit governance. But all of it serves the one principle I started with: humans stay in control while Claude agents do the work, and the means of staying in control has to scale with the team, not just with one developer who can hold the whole picture in his head.

Gluon is open source under the MIT license: github.com/carrotly-ai/gluon-agent. Version 0.8.0, Python 3.12+, Docker-deployable, 80+ REST endpoints, 40+ chat tools, 50+ CLI commands. I didn't want to build this behind a SaaS paywall -- teams should own their orchestrator. If you're running Claude agents today, fork it and make it yours.

The tmux chaos of three months ago feels like ancient history. But the question it raised -- who's watching the agents? -- only gets more urgent as the agents multiply. That's the question I'd keep asking long after the dashboard stops feeling new: not whether the agents can do the work, but whether anyone is still in a position to catch it when they quietly don't.

Series Navigation

- Post 1: From tmux Chaos to AI Agent Orchestration

- Post 2: Inside the Cockpit

- Post 3: Ralph Loop — Autonomous Execution

- Post 4: From Solo Tool to Team Infrastructure (you are here)
