Inside the Cockpit: How Gluon Turns AI Agents into a Managed Workflow

Part 2 of 4 in the series Gluon: Building an AI Agent Orchestrator

The Question That Kept Interrupting

Four agents running. Auth bug. API refactor. Tests. A fourth — honestly, I'd scrolled through too many logs to remember what it was doing.

Every few seconds, the same question: is it still running?

Not "is the code good?" Not "did it pick the right approach?" Just: is it still running? The most basic question you can ask about a process, and I couldn't answer it without switching tabs and scanning walls of terminal output.

That single question — is it still running? — is the difference between running agents and orchestrating them. The first is an experiment. The second is a workflow.

Why Cockpits Exist

In aviation, pilots handle the flying. The cockpit crew coordinates everything else — fuel, course, radio traffic, weather. Multiple instruments feeding information to one place. Multiple people, one unified picture.

That's what was missing. Not smarter agents. A better cockpit.

- Centralized information: one dashboard instead of scattered terminals. Where are the agents? What are they doing? How much have they cost? What needs attention?

- Isolated actions: each agent works independently; git worktrees ensure one can't delete another's work.

- Real-time awareness: tool calls visualized as they execute, costs ticking up live.

- Multiple control surfaces: CLI for terminal workflows, web dashboard for visual oversight, Telegram for phone checks, Discord for team coordination, PWA for mobile. Same orchestrator, many surfaces.

- Built-in safeguards: cost caps, iteration limits, circuit breakers that stop agents from looping forever.

From Intent to Execution in 30 Seconds

Let me walk you through what actually happens when I start work.

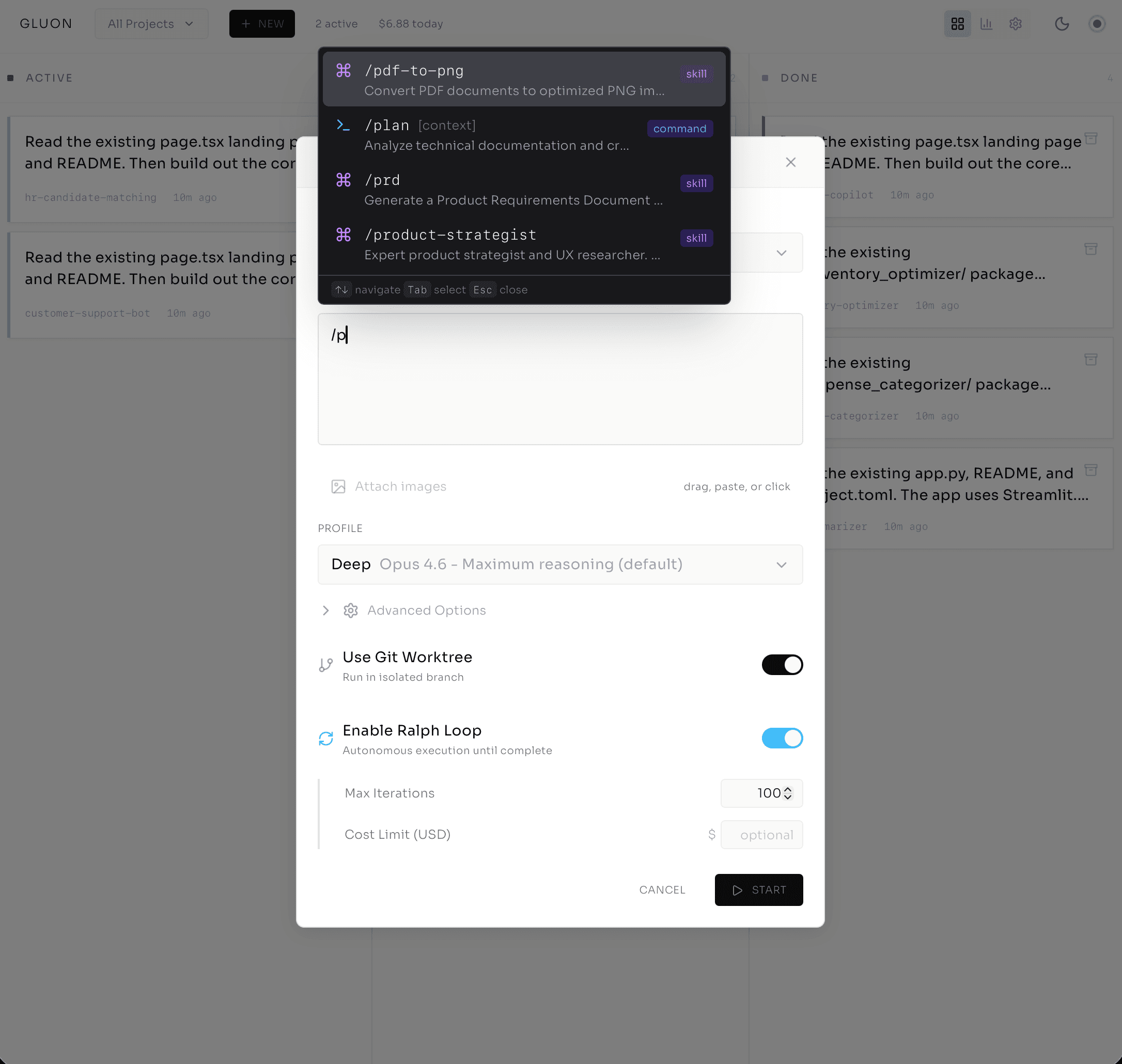

I open the web dashboard and click the plus icon. A task creation dialog appears.

First: the project selector. I pick auth-service. Projects are grouped by workspace, which matters when you're working across team codebases.

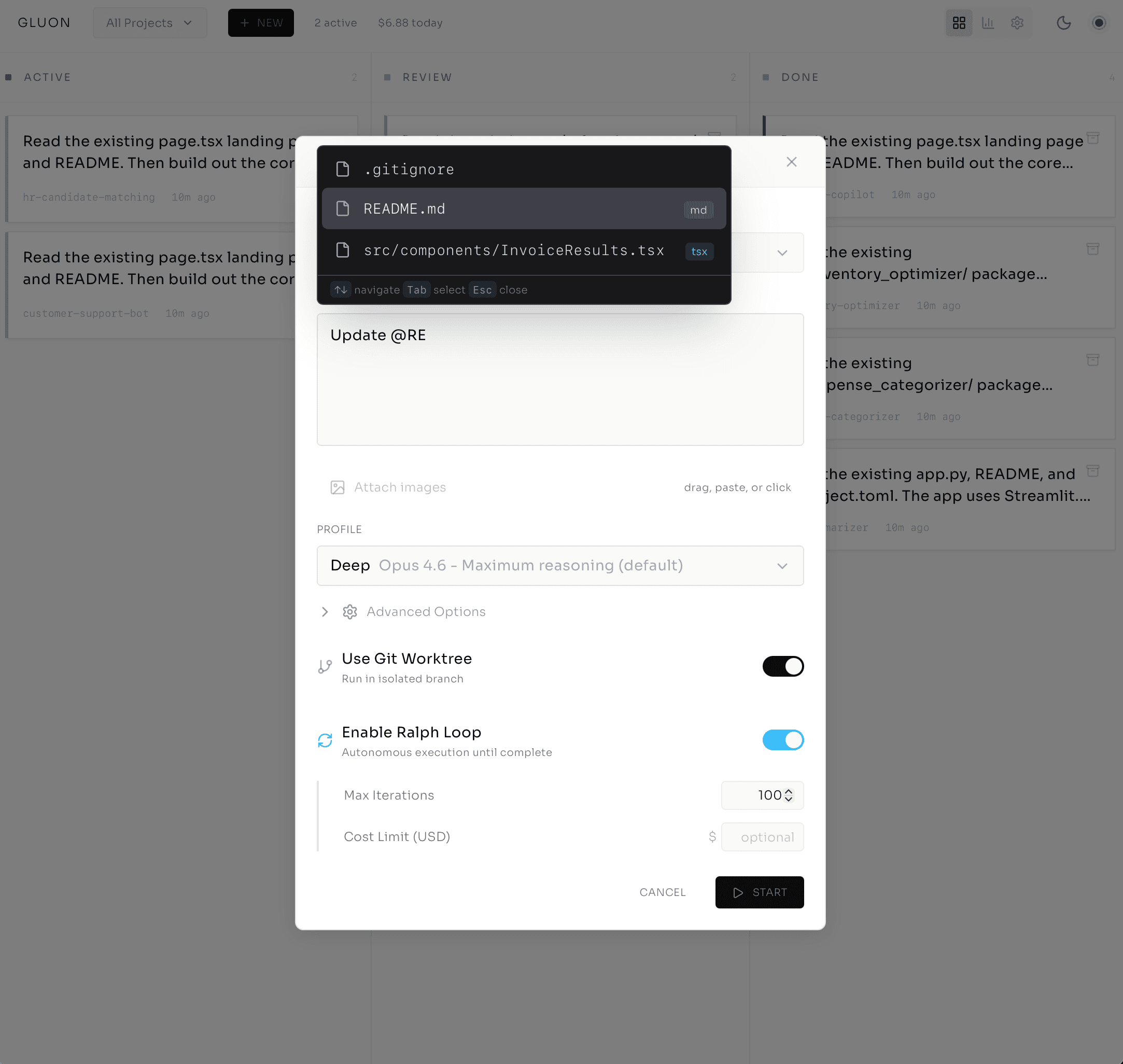

Then: describe the work. I type /fix-auth-bug and Gluon autocompletes. Intent clear. But I add more: @src/auth/signup.ts. That file gets included in the agent's context window — no guessing about which file to look at. I've pointed directly at what matters.

Now: profile selection. This is where model choice becomes a cost decision you make once, not a bill you discover later.

- Quick uses Haiku — fast, cheap. For simple fixes.

- Standard uses Sonnet — balanced. My default.

- Deep uses Opus — maximum reasoning for complex refactoring.

- Planning uses Opus with explicit plan-before-execute mode.
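One way to picture the profile list is as a small lookup table. This is a minimal sketch, not Gluon's actual schema: the profile names and model tiers come from the list above, while the `Profile` class, its field names, and `resolve_profile` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Profile:
    model: str        # model tier named in the post
    plan_first: bool  # True only for the plan-before-execute profile


# Names and tiers are from the post; the layout is an illustrative assumption.
PROFILES = {
    "quick": Profile(model="haiku", plan_first=False),
    "standard": Profile(model="sonnet", plan_first=False),
    "deep": Profile(model="opus", plan_first=False),
    "planning": Profile(model="opus", plan_first=True),
}


def resolve_profile(name: str = "standard") -> Profile:
    """Look up a profile by name, case-insensitively (hypothetical helper)."""
    try:
        return PROFILES[name.lower()]
    except KeyError:
        raise ValueError(f"unknown profile: {name!r}") from None
```

Making Standard the default argument mirrors the "my default" choice: the cost decision is made once, at task creation.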

I pick Standard. Toggle on Git Worktree for isolation. Ralph Loop stays off: I want to watch this one before letting it run autonomously. I can attach an image if needed: screenshot of the bug, error logs, anything visual.

One click. Task created. Within 30 seconds, the agent is running.

And here's the part that changed how I work: I can watch it happen live.

Watching the Work — Not Waiting for It

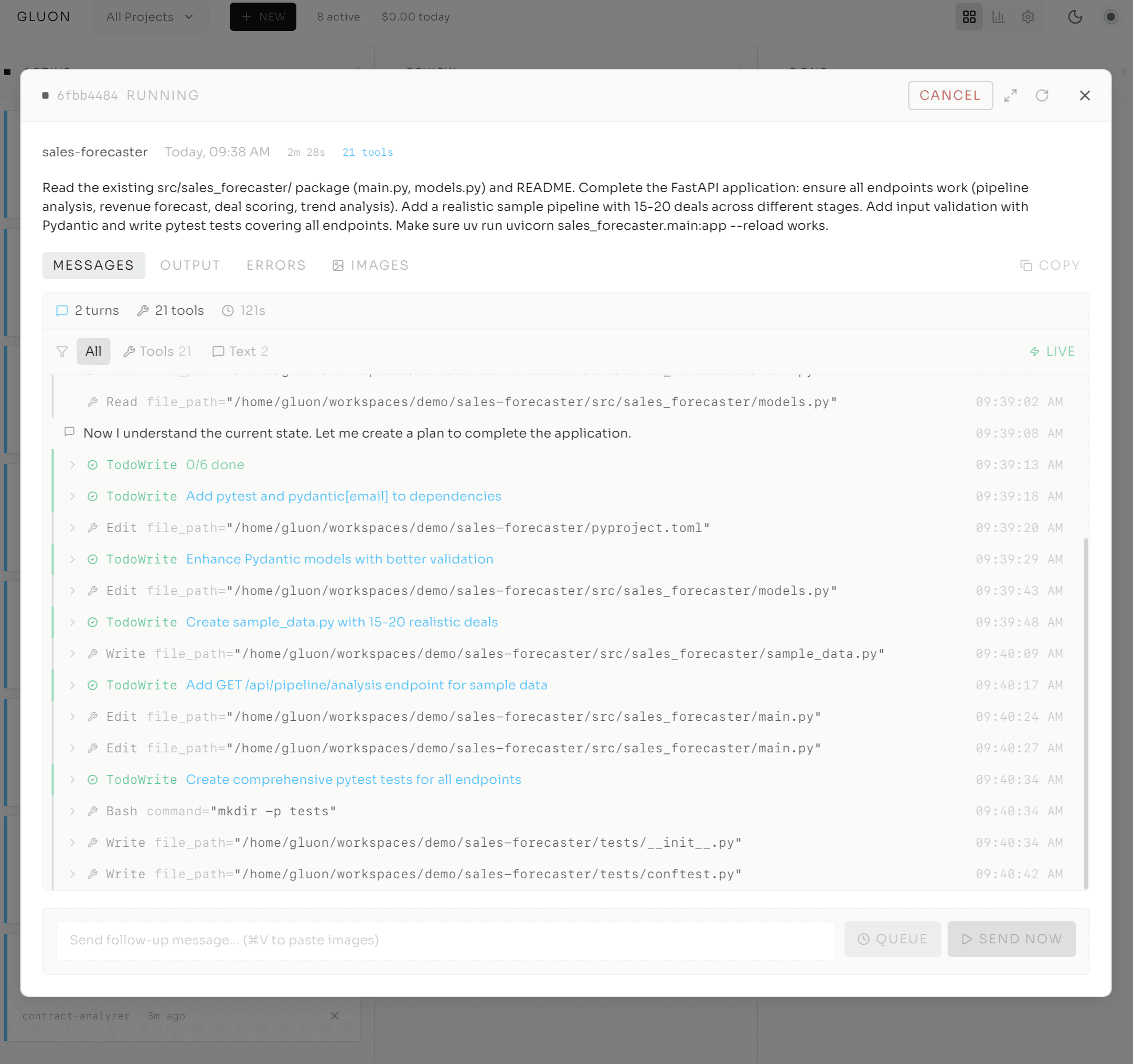

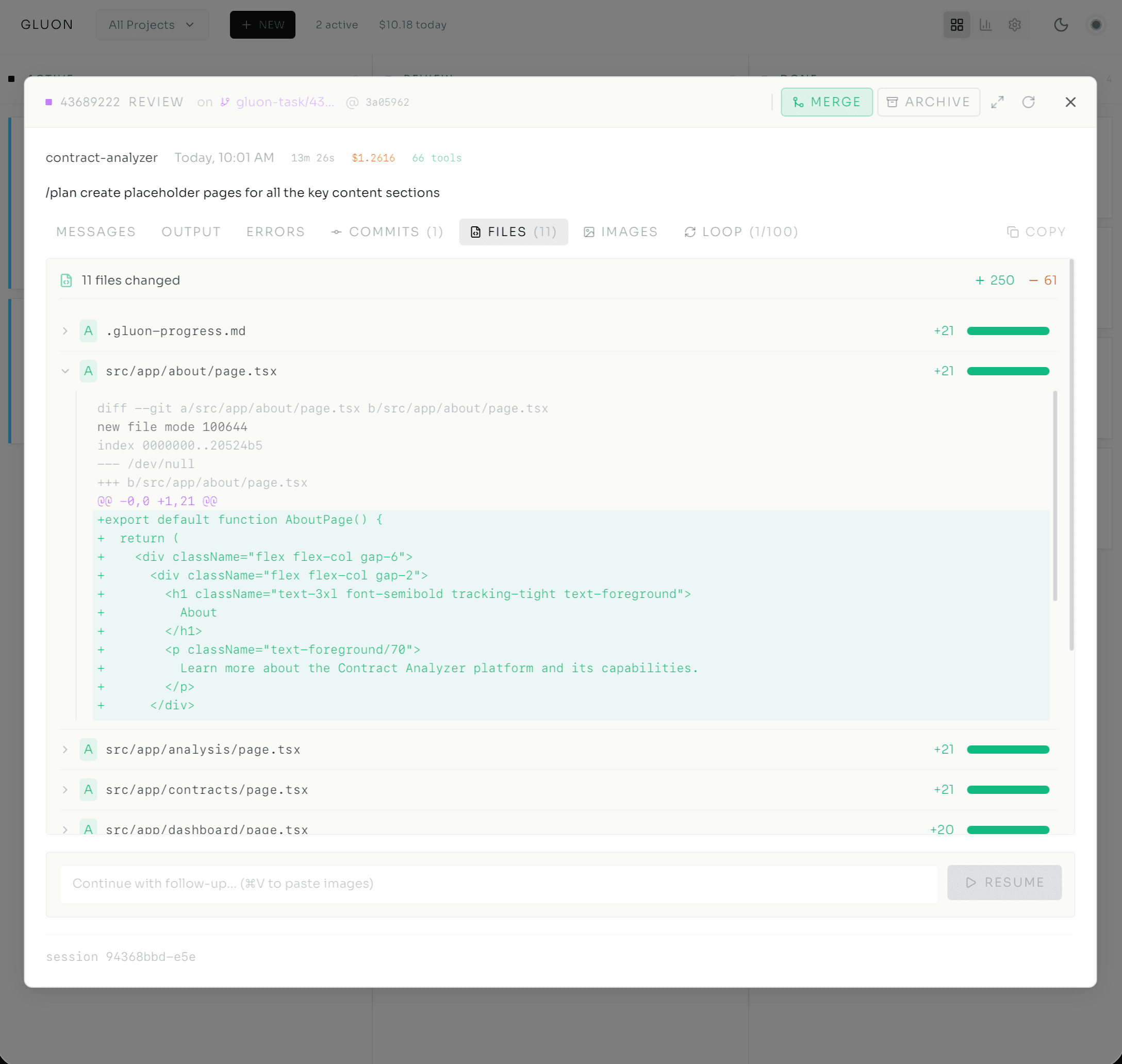

The task opens in the Run Details modal. Tabs across the top: Output, Errors, Messages, Commits, Files, Attachments, Loop, History. I'm on Output.

The agent's thinking streams in real time. No polling. No refresh button. It arrives over a WebSocket connection, so I'm seeing what the agent does as it happens.

It reads the signup file. Identifies the bug — line 42, the email validation regex doesn't handle new TLDs like .cloud. Writes a test case that reproduces the error. Each step appears in the stream:

[TOOL CALL] file_read: src/auth/signup.ts (2.4KB)

[TOOL CALL] bash: npm test -- signup.test.ts (failed, as expected)

[TOOL CALL] file_write: src/auth/signup.ts (lines 40-45 modified)

[TOOL CALL] bash: npm test -- signup.test.ts (passed)

No guessing. No terminal tab-switching. I'm watching a developer work — reading the file, writing a failing test, fixing the code, watching the test pass. Except this developer runs at machine speed and costs $0.47.

And while it works, a cost counter ticks in the corner. $0.02. $0.04. $0.07. Financial transparency in real time, not a surprise on the invoice. Is it still running? Yes, and I know exactly what it's doing and what it costs.

I can filter by tool type: file operations, bash calls, errors only. Pause auto-scroll to read carefully. Expand tool calls to see inputs and outputs. If the agent needs guidance, an "Input Required" badge appears and I jump in via the resume flow.
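Filtering by tool type is easy to imagine if each stream line is parsed into fields first. The sketch below assumes every tool-call line looks like the four samples above, a tool name, a target, and a trailing note in parentheses. The `ToolCall` type and both function names are hypothetical, not Gluon's API.

```python
import re
from typing import Iterable, List, NamedTuple, Optional, Set


class ToolCall(NamedTuple):
    tool: str    # e.g. "bash" or "file_read"
    target: str  # file path or shell command
    note: str    # text inside the trailing parentheses


# Assumed line shape: "[TOOL CALL] <tool>: <target> (<note>)"
LINE_RE = re.compile(
    r"^\[TOOL CALL\] (?P<tool>\w+): (?P<target>.*?) \((?P<note>[^()]*)\)$"
)

STREAM = [
    "[TOOL CALL] file_read: src/auth/signup.ts (2.4KB)",
    "[TOOL CALL] bash: npm test -- signup.test.ts (failed, as expected)",
    "[TOOL CALL] file_write: src/auth/signup.ts (lines 40-45 modified)",
    "[TOOL CALL] bash: npm test -- signup.test.ts (passed)",
]


def parse_line(line: str) -> Optional[ToolCall]:
    """Return a ToolCall, or None for lines that are not tool calls."""
    match = LINE_RE.match(line.strip())
    if match is None:
        return None
    return ToolCall(match["tool"], match["target"], match["note"])


def filter_calls(lines: Iterable[str], tools: Set[str]) -> List[ToolCall]:
    """Keep only the tool calls whose tool name is in `tools`."""
    parsed = (parse_line(line) for line in lines)
    return [call for call in parsed if call is not None and call.tool in tools]
```

Calling `filter_calls(STREAM, {"bash"})` keeps just the two test runs, which is the "bash calls only" view described above.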

Other systems: submit a job, get a result, sit in the dark. Gluon puts you in the cockpit. You're not waiting — you're witnessing.

The Architecture That Protects Everything

Here's the decision that makes all of this safe enough to trust.

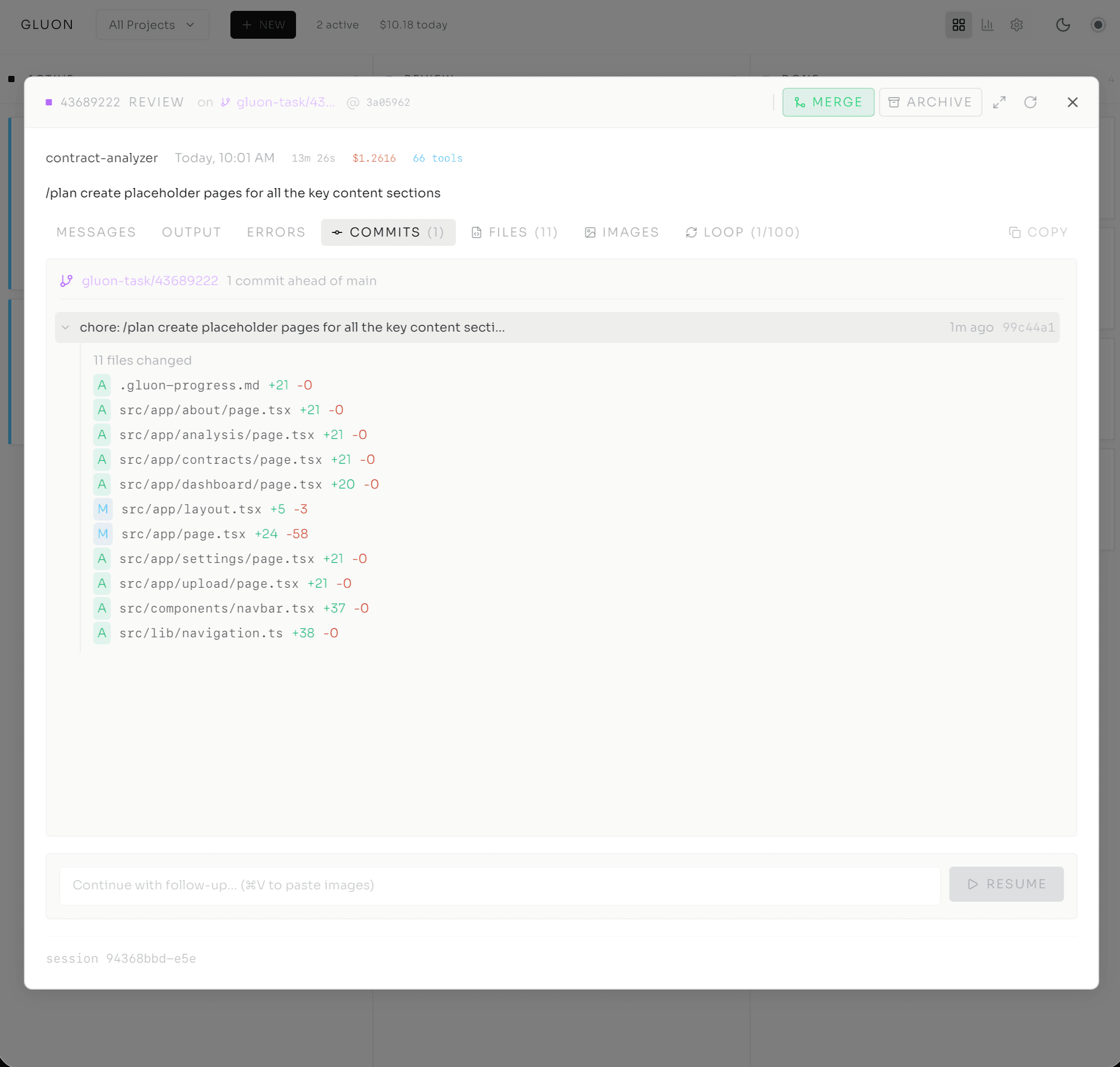

Each task runs in /tmp/gluon-worktrees/wt-{run_id}/, a temporary git worktree: a separate working directory attached to your repository, with its own checked-out branch and index, and far lighter than a full clone. The agent works in that worktree. It makes changes. It commits. It pushes. But it's working on branch gluon-task/{run_id}, a branch unique to that task.

Main stays clean. Untouched.

If something goes catastrophically wrong — if the agent makes a terrible decision — its damage is confined to its own branch. Delete the worktree, move on. Compare this to agents with no isolation. The Replit AI agent? No isolation. It deleted the entire production database. With Gluon, worst case: you lose the worktree.

Before each task starts, Gluon syncs: fetch from remote, fast-forward if behind, auto-commit any uncommitted changes in main. After the task completes, Gluon stages all changes, commits, and pushes. Rebase conflicts get handled automatically. The agent's work lands safely on its own branch, ready for review.
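The lifecycle above maps onto a handful of ordinary git commands. The sketch below only builds the command lists, so it can be read without touching a repository. The worktree path and branch naming come from the post. The remote name `origin`, the base branch `main`, and all function names are assumptions, and the auto-commit and rebase-conflict handling are left out.

```python
from pathlib import PurePosixPath
from typing import List

WORKTREE_ROOT = PurePosixPath("/tmp/gluon-worktrees")  # path from the post


def worktree_path(run_id: str) -> PurePosixPath:
    return WORKTREE_ROOT / f"wt-{run_id}"


def branch_name(run_id: str) -> str:
    return f"gluon-task/{run_id}"


def setup_commands(run_id: str, base: str = "main") -> List[List[str]]:
    """Sync the base branch, then create an isolated worktree on a new branch."""
    return [
        ["git", "fetch", "origin"],
        ["git", "merge", "--ff-only", f"origin/{base}"],
        ["git", "worktree", "add", "-b", branch_name(run_id),
         str(worktree_path(run_id)), base],
    ]


def finish_commands(run_id: str, message: str) -> List[List[str]]:
    """Stage, commit, and push the agent's work from inside its worktree."""
    wt = str(worktree_path(run_id))
    return [
        ["git", "-C", wt, "add", "--all"],
        ["git", "-C", wt, "commit", "-m", message],
        ["git", "-C", wt, "push", "--set-upstream", "origin", branch_name(run_id)],
    ]


def cleanup_commands(run_id: str) -> List[List[str]]:
    """Throw the worktree away; the base branch is never touched."""
    return [["git", "worktree", "remove", "--force", str(worktree_path(run_id))]]
```

Each list could be passed to `subprocess.run(cmd, check=True)` from the main checkout. Keeping command construction separate from execution makes the isolation rules easy to inspect.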

The isolation extends to parallel work. Agent One works on auth in wt-abc123. Agent Two works on the API in wt-def456. They're completely invisible to each other. Zero interference. Zero risk of accidentally merging one agent's changes while another is still running.

This is how you scale from one agent to many. Not by hoping they don't collide — by making collision impossible.

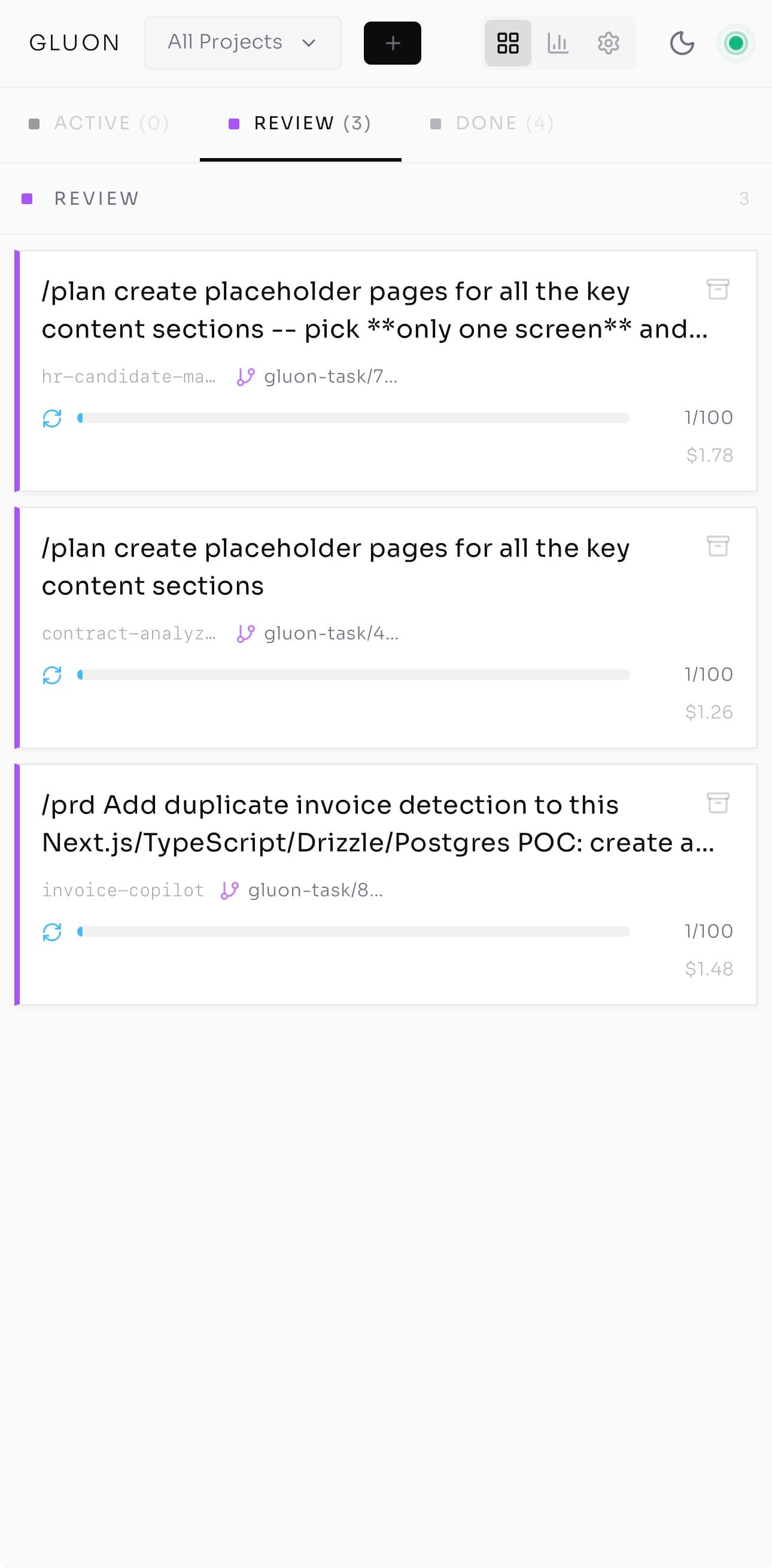

The Kanban Board — One Screen, Many Pilots

Four columns: Queue (waiting), Running (executing), Review (waiting for human decision), Done (complete).

Each task is a card. At a glance:

- Task title and project

- Profile icon — which model (Quick/Standard/Deep/Planning)

- Progress bar for multi-step formulas ("Step 2/4 Implement")

- Health dot — green (healthy), yellow (slow), red (stuck or looping)

- Input Required badge if the agent is waiting for approval
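The health dot can be thought of as a small classifier over run activity. The post does not say how Gluon decides between healthy, slow, and stuck, so the inputs and every threshold in this sketch are hypothetical placeholders.

```python
from enum import Enum


class Health(Enum):
    GREEN = "healthy"
    YELLOW = "slow"
    RED = "stuck or looping"


def health_dot(
    seconds_since_last_event: float,
    repeated_identical_calls: int,
    slow_after: float = 120.0,   # assumed threshold, not from the post
    stuck_after: float = 600.0,  # assumed threshold, not from the post
    loop_threshold: int = 5,     # assumed threshold, not from the post
) -> Health:
    """Classify a run from its idle time and how often it repeats itself."""
    if repeated_identical_calls >= loop_threshold:
        return Health.RED  # same tool call over and over: likely looping
    if seconds_since_last_event >= stuck_after:
        return Health.RED  # nothing has happened for too long: likely stuck
    if seconds_since_last_event >= slow_after:
        return Health.YELLOW
    return Health.GREEN
```

Whatever the real signals are, the point is the same: the card reduces a wall of logs to one of three states that can be read at a glance.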

My board right now: one task in Queue — a feature request. Two in Running — the auth bug (green dot, healthy) and an API refactor (yellow, slower than expected but progressing). One in Review — yesterday's test-writing task. One in Done — ready for PR.

Drag tasks between columns to manually pause or promote. Click any card for full Run Details.

This is the shift from chasing work across terminals to making decisions from a single screen. Not running agents — orchestrating them.

Deep Diagnostics — Everything About a Task

Click a task card. The modal opens with tabs that go deep.

- Output: streaming logs.

- Errors: filtered error messages with stack traces.

- Messages: full conversation history, expandable tool calls, inline screenshot thumbnails.

- Commits: every commit during the run, with author, timestamp, message, and files changed. Click for full diff.

- Files: all changed files with line counts and inline diff viewer.

- Attachments: captured images, diagrams.

- Loop: Ralph iteration progress, circuit breaker state, safety metrics.

- History: related runs in chronological order.

I click Commits for the auth bug. Three commits:

- "Add test case for email validation with new TLDs"

- "Fix regex pattern to support .cloud, .app, .dev"

- "Add test for edge case: nested subdomains"

The first commit shows tests added, assertions clear, tests failing as expected. The second shows the regex change — old pattern handled only standard TLDs, new pattern handles arbitrary extensions. Tests pass.

Everything I need to make a decision — merge or refine — right there. No switching to a terminal. No cloning branches to inspect locally. The cockpit has the instrumentation.

The Numbers You Actually Need

Cost tracking sounds mundane until you realize the difference between model tiers is 25x.

- Haiku: ~$36K/year running continuously

- Sonnet: ~$180K/year

- Opus: ~$900K/year

That's not a rounding error. That's the difference between a viable workflow and an unsustainable one. So you need to know — per task, per project, per day — what you're spending.
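The 25x figure follows directly from the annual estimates above. A short check, using only those three numbers (the function names are illustrative):

```python
# Approximate annual cost of running continuously, from the list above.
ANNUAL_USD = {"haiku": 36_000, "sonnet": 180_000, "opus": 900_000}


def ratio(expensive: str, cheap: str) -> float:
    """How many times more one tier costs than another."""
    return ANNUAL_USD[expensive] / ANNUAL_USD[cheap]


def per_day(model: str) -> float:
    """Spread the annual figure evenly over 365 days."""
    return ANNUAL_USD[model] / 365
```

Here `ratio("opus", "haiku")` is 25.0 and each step up a tier is 5x. Spread over a year, that is roughly $99 a day for Haiku against about $2,466 a day for Opus.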

The Costs dashboard shows real-time spend:

Today: $4.32 (five tasks). This week: $14.78. This month: $32.11.

Click into a project:

auth-service: $8.94

- Task 1 (Standard, Sonnet): $0.47

- Task 2 (Deep, Opus): $1.23

- Task 3 (Quick, Haiku): $0.03

Each task shows the model and exact cost. And I can set a cost cap on any task with --max-cost. Set it to $5, the agent stops at $5. No surprise bills. No runaway loops draining the budget.
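A cost cap comes down to a running total that is checked before each new step. The sketch below is one plausible shape for that check, not Gluon's implementation: the `CostCap` class and its methods are hypothetical, and it assumes cost is only known after a step finishes, so a run can overshoot the cap by at most one step.

```python
from decimal import Decimal


class CostCap:
    """Track spend for one task and report when the cap is reached."""

    def __init__(self, max_cost: str) -> None:
        # Decimal avoids float rounding drift when summing many small charges.
        self.max_cost = Decimal(max_cost)
        self.spent = Decimal("0")

    def record(self, amount: str) -> None:
        """Add the cost of a completed step to the running total."""
        self.spent += Decimal(amount)

    @property
    def exhausted(self) -> bool:
        """True once spend has reached or passed the cap."""
        return self.spent >= self.max_cost

    @property
    def remaining(self) -> Decimal:
        return max(self.max_cost - self.spent, Decimal("0"))
```

An agent loop would check `cap.exhausted` before starting each step and stop as soon as it returns True.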

If you're running agents without cost visibility, you're flying without a fuel gauge. You'll find out you're empty when you crash.

Same Orchestrator, Every Surface

Here's the architectural move that made everything click.

I submit a task from the web dashboard. Thirty seconds later, a coworker checks status via Telegram. Same task, different interface. He types /logs and sees the real-time stream inline.

That night on the couch: I pull up Gluon on my phone. It's a PWA — installable like a native app. Pull to refresh. Same Kanban board, responsive. The task from this morning shows green.

At my desk: CLI. gluon logs {run-id} -f streams the same logs to my terminal.

One SQLite database. One FastAPI backend. One WebSocket layer. Whether you're in CLI, web, Telegram, Discord, or PWA — same system. And because the interface layer is decoupled, adding a new surface doesn't touch the core. Slack bot? Route through the orchestrator. Email? Same. Custom webhook? Same.
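The decoupling can be illustrated with a core that knows nothing about its surfaces. In this sketch the orchestrator stores log lines and notifies subscribers, and each surface is just a callback that formats lines its own way. All class and function names are hypothetical, and an in-memory dict stands in for the SQLite and WebSocket layers.

```python
from typing import Callable, Dict, List

Subscriber = Callable[[str, str], None]  # receives (run_id, line)


class Orchestrator:
    """Single source of truth; surfaces subscribe instead of being hard-wired."""

    def __init__(self) -> None:
        self._logs: Dict[str, List[str]] = {}
        self._subscribers: List[Subscriber] = []

    def subscribe(self, callback: Subscriber) -> None:
        self._subscribers.append(callback)

    def emit(self, run_id: str, line: str) -> None:
        """Record a log line once, then fan it out to every surface."""
        self._logs.setdefault(run_id, []).append(line)
        for callback in self._subscribers:
            callback(run_id, line)

    def logs(self, run_id: str) -> List[str]:
        return list(self._logs.get(run_id, []))


def cli_surface(sink: List[str]) -> Subscriber:
    """Terminal-style formatting (illustrative)."""
    return lambda run_id, line: sink.append(f"{run_id} | {line}")


def chat_surface(sink: List[str]) -> Subscriber:
    """Chat-bot-style formatting (illustrative)."""
    return lambda run_id, line: sink.append(f"[{run_id}] {line}")
```

Adding another surface means writing one more subscriber; the `Orchestrator` class does not change.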

Wherever I am — desk, couch, coffee queue — the board is one tap away.

The Question, Answered

After enough real work had run through the board, I noticed I'd stopped asking the question I opened with — is it still running? Not because it stopped mattering, but because the answer was always one glance away. Green dot, yellow dot, red dot. The cockpit answers before you ask.

That foundation is what makes Part 3 possible: autonomous loops. The Ralph Loop — when you stop monitoring each step and let the agent decide for itself, bounded by a cost cap and an iteration limit, running until the work is done.

That's when orchestration becomes transformational. But it only works if you trust the cockpit first.

Series Navigation

- Post 1: From tmux Chaos to AI Agent Orchestration

- Post 2: Inside the Cockpit (you are here)

- Post 3: Ralph Loop — Autonomous Execution

- Post 4: From Solo Tool to Team Infrastructure
