This post is a bit of a brain-dump detailing exactly how/what/why. If you just want the juicy bits, skip to the bottom 😉

I’ve been a loyal user of the Apache Web Server for some 10 years, working on everything from custom server modules (in C) all the way through to bog-standard use cases like reverse proxying and LAMP stacks. Up until now, if I had to do anything web related I’d probably run it on Apache or behind it (proxied).

Recently I started work on a small project that I plan to deploy across a number of small (256M) virtual machines. Looking for the best bang for the buck, I decided Apache was too heavyweight for my needs – it was time to look at the other options. I was already curious about the growing popularity of Lighttpd and NGINX and decided to look into them both more seriously.

Lighttpd has a huge following and is used by everyone from YouTube to Wikipedia. It is very different from Apache and things like configuration and URL rewriting are not easily ported. Knowing that I was going to need a backend (Perl, Python, PHP) I looked at the options and searched discussion forums. There seemed to be a consensus that NGINX was more stable and less leaky than Lighttpd so I moved on.

NGINX seemingly got off to a slow start, apparently for lack of English documentation and community. However, over the past few years it has really started to take off and, according to Netcraft, it’s now the third most popular web server software (behind Apache and IIS). It supports FastCGI, SCGI and uWSGI backends, has a low memory footprint and is reportedly rock-solid.

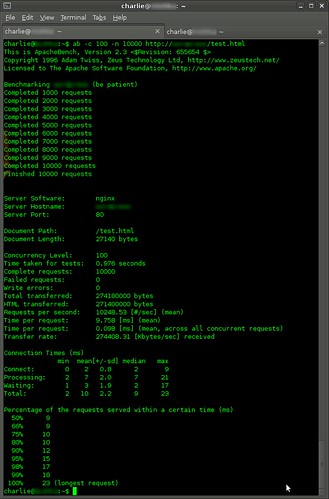

I decided to take NGINX for a spin on my tiny virtual machine. Installation under Fedora 14 (x86_64) was straightforward enough – it’s a package included in the Fedora distribution. Configuration was simplez: tweaking the default template seemed good enough for starters. Ok, so my VM is up and running, time to whip out ApacheBench.

None of these tests were conducted in a particularly scientific way, so take the results with a pinch of salt. Each test was repeated at least 3 times and the results here are the average.

Test 1. NGINX default configuration serving static files

I created a simple “index.html” file (13 bytes long) and used ApacheBench to load 100 concurrent connections with a total of 50,000 requests.

6,362.94 requests / sec
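For anyone wanting to repeat this, a run like the one above can be scripted. The sketch below is not the harness I used for these numbers. It just builds the equivalent `ab` command line (`-n` for total requests, `-c` for concurrency) and averages the "Requests per second" figure over several saved reports. The function names and any URL you feed it are placeholders.

```python
import re
from statistics import mean

# Matches the summary line ApacheBench prints, e.g.
# "Requests per second:    6362.94 [#/sec] (mean)"
RPS_LINE = re.compile(r"^Requests per second:\s+([0-9.]+)", re.MULTILINE)


def ab_command(url, requests=50000, concurrency=100):
    """Build the ab argument list: -n is total requests, -c is concurrency."""
    return ["ab", "-n", str(requests), "-c", str(concurrency), url]


def requests_per_second(ab_output):
    """Extract the requests/sec figure from one ab report."""
    match = RPS_LINE.search(ab_output)
    if match is None:
        raise ValueError("no 'Requests per second' line found")
    return float(match.group(1))


def average_rps(reports):
    """Average the requests/sec figure over several ab reports."""
    return mean(requests_per_second(report) for report in reports)
```

The list from `ab_command()` can be handed to `subprocess.run(..., capture_output=True, text=True)`, with the resulting `stdout` of each run collected and passed to `average_rps()`.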

Ok, seems like a good start, so what about dynamic content? First stop was to try FastCGI. I used spawn-fcgi and fcgiwrap to run a simple Perl script. In fact I liked fcgiwrap so much I forked it on GitHub and added a spec file to build the RPMs on Fedora/Redhat/CentOS.

Test 2. NGINX default configuration with FastCGI to simple Perl script

The Perl script just returned a fixed string. I was primarily trying to measure the overhead of invoking the script, not the cost of anything complicated done within it. With the same parameters as before:

1,222.57 requests / sec

Not bad, I thought to myself, so I carried on and implemented a simple API function in Perl, called through the same route.

Test 3. NGINX default configuration with FastCGI to my API Perl script

Nothing too complicated: accept a couple of parameters and spit out some data. No databases, files or anything else to worry about. Essentially I was just blurting out environment variables.

183.76 requests / sec

Holy sh!t, I wasn’t doing anything *that* complicated – it can’t be right. Hmmm, well I am using a couple of Perl modules to do my evil bidding – ok I will implement it myself. Even with my 31337 Perl skillz I was unable to make much improvement.

So I went investigating where the problem was and it essentially boiled down to “CGI is slow by design”. fcgiwrap speaks FastCGI to NGINX but still runs the script as a plain CGI program, so every request spawns a new UNIX process (and a fresh Perl interpreter), which will always be slow. Well, I knew that, but anyway, moving on.

I took a look at uWSGI, a Python application container with bindings to NGINX. I was loath to go down this route for the odd ad-hoc script I wanted to run and shifted it to the back burner. As much as the puritan software engineer voice in my head screamed “NOOOOOO“, next up PHP *cough*…

I haven’t dabbled much with PHP since benchmarking my WordPress installation and seeing it barely serve 15 requests per second while crippling a quad core behemoth of a server. Add-ons to WordPress (WP-Super-Cache etc.) worked around these issues as a temporary solution, but I’ve moved over to Movable Type now.

Grabbing the “php-cli” package for Fedora gives you the “php-cgi” binary, which implements the FastCGI protocol. Hook it up (over a UNIX socket) to NGINX and you are away.

Test 4. NGINX default configuration with FastCGI (PHP) to a simple script

After wrestling with spawn-fcgi to get it to launch php-cgi and hooking it all together, I quickly knocked up a Hello World script in PHP to prove a point. I was astonished by the first results:

1,657.60 requests / sec

Keen to test it out for real, I re-implemented my noddy API in PHP and ran more extensive tests. I started to hit a wall: after about 15,630 requests (almost exactly that number each time) ApacheBench would time out and NGINX would become unresponsive. There was nothing exciting in the error logs, and restarting spawn-fcgi and NGINX seemed to fix the problem for another 15,630 requests.

After googling I found quite a few people reporting other odd results and resorting to wrapping php-cgi with additional gubbins to restart it automatically etc. Favouring a real solution rather than a band-aid, I stumbled across PHP-FPM (FastCGI Process Manager) and rave reviews about it. PHP-FPM used to exist as a set of patches to PHP but was recently merged into version 5.3. It has to be explicitly enabled in the build and unfortunately it wasn’t for the Fedora packages.

Go-go-gadget yumdownloader: I downloaded the source “php-5.3.6-1.fc14.src.rpm” and set about hacking the spec file. After a bit of scouting I decided the best way to do it was to add a separate build stage “build-fpm” to the rpmbuild and add the resulting binary “php-fpm” to the “php-cli” package alongside its “php-cgi” brother. I posted the hacked spec file and some premade RPMs on GitHub for all to see.

Test 5. NGINX default configuration with FastCGI (PHP-FPM) to my API script

After all the effort I was sorely disappointed to see the same performance and timeout issues.

1,444.93 requests / sec

Back to googling, and much frustration after feeling certain PHP-FPM was the solution to my problems. It was only then I realised maybe neither NGINX nor PHP was to blame. Sure enough, my /var/log/messages was full of messages like “nf_conntrack: table full, dropping packet.” and “TCP: time wait bucket table overflow”. That might explain it…
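If you suspect the same thing on your own box, a few lines of Python will tell you how often the kernel is complaining. This is only a sketch: it looks for the two message strings quoted above and nothing else, and the `count_symptoms` name and the dictionary keys are made up for illustration.

```python
# Substrings taken from the two kernel messages quoted above.
SYMPTOMS = {
    "conntrack_full": "nf_conntrack: table full, dropping packet",
    "time_wait_overflow": "TCP: time wait bucket table overflow",
}


def count_symptoms(lines):
    """Count how many log lines contain each symptom string."""
    counts = {name: 0 for name in SYMPTOMS}
    for line in lines:
        for name, needle in SYMPTOMS.items():
            if needle in line:
                counts[name] += 1
    return counts
```

Typical use would be `count_symptoms(open("/var/log/messages", errors="replace"))`, run as a user allowed to read that file. Non-zero counts during a benchmark run point at the kernel's connection tracking rather than at NGINX or PHP.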

After a bit more searching I found a solution with a mod to iptables. Firstly, drop any stateful rules like:

/etc/sysconfig/iptables:

-A INPUT -m state --state ESTABLISHED,RELATED -j ACCEPT

-A INPUT -m state --state NEW -m tcp -p tcp --dport 22 -j ACCEPT

Instead, replace them with a straightforward:

-A INPUT -m tcp -p tcp --dport 22 -j ACCEPT

Next, we need to tell iptables not to do connection-tracking. I chose to frig the start() function in my /etc/init.d/iptables script to do this, but there’s probably a better way.

# HACK

iptables -t raw -I OUTPUT -j NOTRACK

iptables -t raw -I PREROUTING -j NOTRACK

At the same time I was reading some NGINX optimisation advice and applied the following:

worker_processes 4;

worker_rlimit_nofile 32768;

events {

worker_connections 8192;

use epoll;

}
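As a rough sanity check on what those numbers allow (this is my reading of the NGINX docs, not something I measured): `worker_connections` is a per-worker cap that counts every connection a worker holds, including the one to the FastCGI backend, and `worker_rlimit_nofile` is the per-worker limit on open files. The `connection_budget` helper below is purely illustrative.

```python
def connection_budget(worker_processes, worker_connections, worker_rlimit_nofile):
    """Rough upper bounds implied by the three settings."""
    total_connections = worker_processes * worker_connections
    return {
        # Upper bound on simultaneous connections across all workers.
        "total_connections": total_connections,
        # Assumption: a FastCGI request holds one client connection and
        # one backend connection, so two connections per request.
        "fastcgi_requests": total_connections // 2,
        # worker_connections is only reachable if the per-worker
        # file-descriptor limit is at least as large.
        "fd_limit_sufficient": worker_rlimit_nofile >= worker_connections,
    }


budget = connection_budget(
    worker_processes=4, worker_connections=8192, worker_rlimit_nofile=32768
)
print(budget)
```

Either way, that is far more headroom than 100 or 250 concurrent ApacheBench connections will ever need, so these settings are unlikely to be the limiting factor in the tests here.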

With these tweaks in place and keen to test it out…

Test 6. NGINX tweaked configuration with FastCGI (PHP-FPM) to my API script

Just the iptables and NGINX configurations were tweaked and the tests were repeated after a reboot to ensure they were all persistent.

3,671.92 requests / sec

That’s more like it, and not a single lockup or timeout in 50,000 requests. So I upped the ante and did 500,000 requests: solid as a rock at around 3,500 requests per second. I then upped the request count to 5,000,000 to really burn it in (this time with 250 concurrent requests) and again got around 3,500 requests per second.

CPU load on the VM was noticeable, but it’s just a small 256M slice and I was amazed to get this much mileage out of it. Lastly, I wanted to see how the static file performance changed after these tweaks.

Test 7. NGINX tweaked configuration serving static files

Back to my little 13 byte “index.html” file, this time the performance was beyond my wildest dreams…

24,265.55 requests / sec

Yup, that’s 24 THOUSAND requests per second, on a crappy little VM slice. The network interface was only doing about 5MB/sec; it was the CPU, not the network, that was choking it from doing more.
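A quick back-of-the-envelope check on those two figures, assuming “about 5MB/sec” means roughly 5,000,000 bytes per second of response traffic (it is only an eyeballed number, so treat the result as a ballpark):

```python
BODY_BYTES = 13               # size of index.html, from the test description
REQUESTS_PER_SEC = 24265.55   # measured result above
THROUGHPUT_BYTES = 5_000_000  # assumption: "about 5MB/sec" taken as 5 * 10**6


def bytes_per_response(throughput_bytes_per_sec, requests_per_sec):
    """Average bytes moved per response at a given throughput."""
    return throughput_bytes_per_sec / requests_per_sec


wire_bytes = bytes_per_response(THROUGHPUT_BYTES, REQUESTS_PER_SEC)
overhead = wire_bytes - BODY_BYTES
print(f"~{wire_bytes:.0f} bytes per response, ~{overhead:.0f} of them not body")
```

That works out to a couple of hundred bytes per response, nearly all of it headers and protocol overhead rather than the 13 byte body. In other words this test measures how fast NGINX can turn requests around, not how fast it can shovel data.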

That’s the performance I was looking for: blisteringly fast static file serving and awesome dynamic content serving. I was quite astounded at the difference from a traditional LAMP stack and will definitely use this new approach for any high-traffic applications in the future.
